Editor: Android資訊

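Based on an analysis of the Android 6.0 source code, this article takes an in-depth look at the Binder IPC process. It follows one Binder call end to end, from the Java framework through the native layer and down into the Linux kernel.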

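The Android kernel is based on Linux, and Linux already offers several IPC mechanisms: pipes, message queues, shared memory, sockets, semaphores, and signals. So why does Android insist on using Binder for inter-process communication?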

I once tried to answer this same question on Zhihu from my own perspective: "Why does Android use Binder as its IPC mechanism?"

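It was the first time I had answered a question on Zhihu in earnest. The many likes and replies were very encouraging, and I resolved to share more high-quality articles with readers and followers as my small contribution to the Android community.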

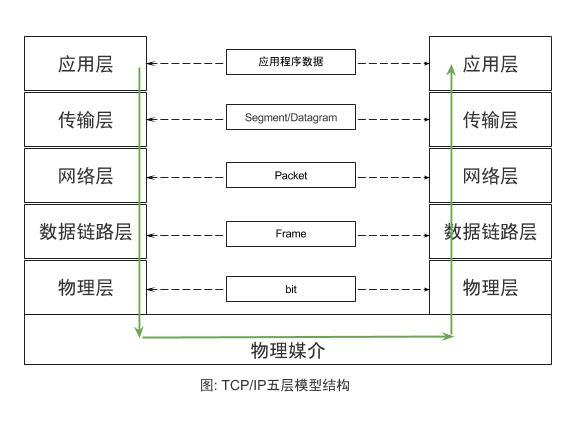

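Before getting into the Binder architecture, a brief look at the familiar five-layer TCP/IP communication model:

This is the classic five-layer TCP/IP protocol stack. Layering turns each sub-problem into an independent protocol, which makes each protocol simpler to design, analyze, implement, and test: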

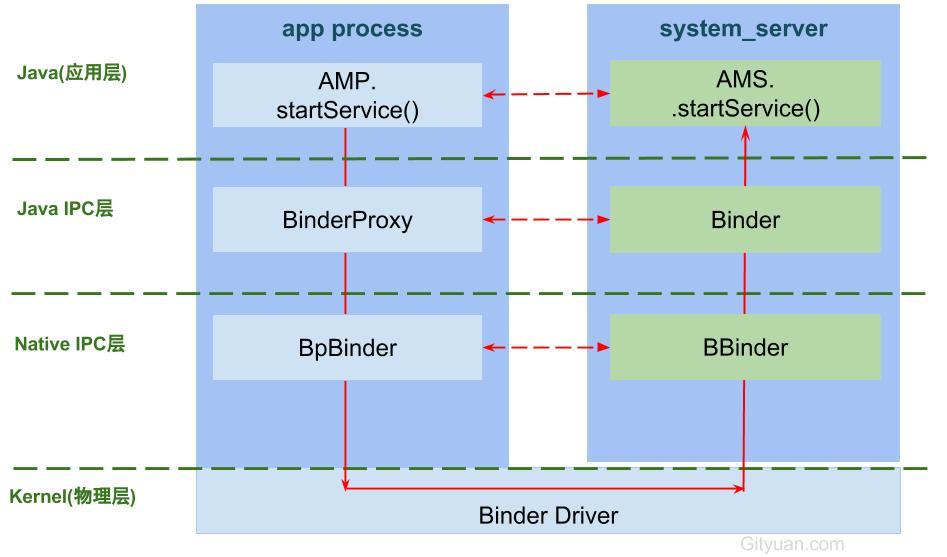

The Binder architecture is layered as well, and each layer has its own function:

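The earlier Binder series, starting with its opening article (Binder系列-開篇), explained each layer of Binder from the source code. But Binder touches so much of the system, and is so nearly the backbone of the whole Android architecture, that even a dozen or so articles on its individual pieces still did not fully explain the Binder IPC (inter-process communication) process.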

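Binder is a large system, so we need a starting point that threads everything together and gives a view of the whole. This article takes a new angle and uses the startService flow as an example to show the role Binder plays.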

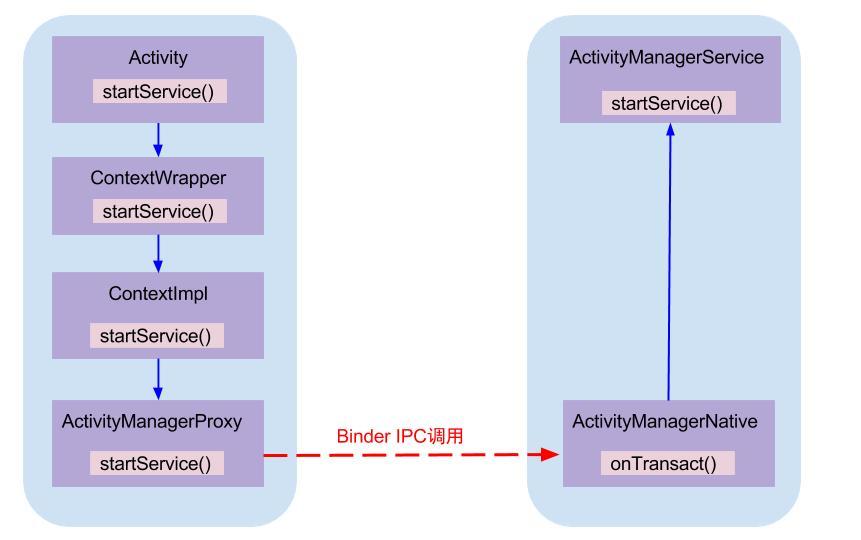

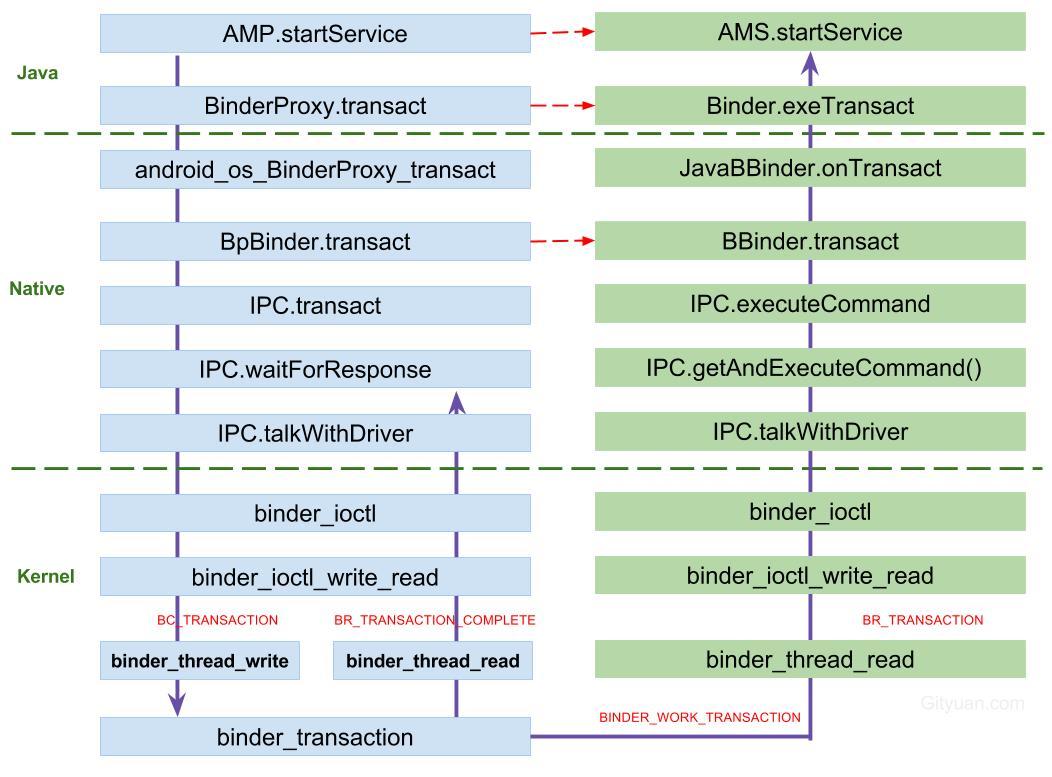

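First, the initiating process calls AMP.startService; the call passes through the Binder driver and finally reaches AMS.startService in the system process, as shown in the figure: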

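AMP (ActivityManagerProxy) and AMN (ActivityManagerNative) both implement the IActivityManager interface, and AMS (ActivityManagerService) extends AMN. AMP acts as the Binder client and runs in each app's process; AMN (or AMS) runs in the system process, system_server.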
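The division of roles among AMP, AMN, and AMS can be sketched in plain Java. This is a simplified illustration, not framework code: `Greeter`, `GreeterProxy`, `GreeterStub`, `GreeterService`, and `Transport` are hypothetical stand-ins for IActivityManager, AMP, AMN, AMS, and the Binder transport, and a string array stands in for a Parcel.

```java
public class ProxyStubSketch {
    /** Shared interface, playing the role of IActivityManager. */
    interface Greeter {
        String greet(String name);
    }

    /** Hypothetical stand-in for the Binder transport: a code plus flat data. */
    interface Transport {
        String[] transact(int code, String[] data);
    }

    static final int GREET_TRANSACTION = 1;

    /** Client side (role of AMP): packs the arguments and forwards them. */
    static final class GreeterProxy implements Greeter {
        private final Transport remote;

        GreeterProxy(Transport remote) {
            this.remote = remote;
        }

        @Override
        public String greet(String name) {
            String[] reply = remote.transact(GREET_TRANSACTION, new String[] {name});
            return reply[0];
        }
    }

    /** Server side (role of AMN): unpacks the arguments and dispatches by code. */
    abstract static class GreeterStub implements Greeter, Transport {
        @Override
        public String[] transact(int code, String[] data) {
            if (code == GREET_TRANSACTION) {
                return new String[] {greet(data[0])};
            }
            throw new IllegalArgumentException("unknown code " + code);
        }
    }

    /** Concrete service (role of AMS): extends the stub and holds the logic. */
    static final class GreeterService extends GreeterStub {
        @Override
        public String greet(String name) {
            return "hello " + name;
        }
    }

    public static void main(String[] args) {
        // In the real system the proxy and the service live in different processes.
        Greeter client = new GreeterProxy(new GreeterService());
        System.out.println(client.greet("binder")); // prints "hello binder"
    }
}
```

The caller only sees the shared interface; whether the call is marshalled and sent elsewhere is hidden behind the proxy.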

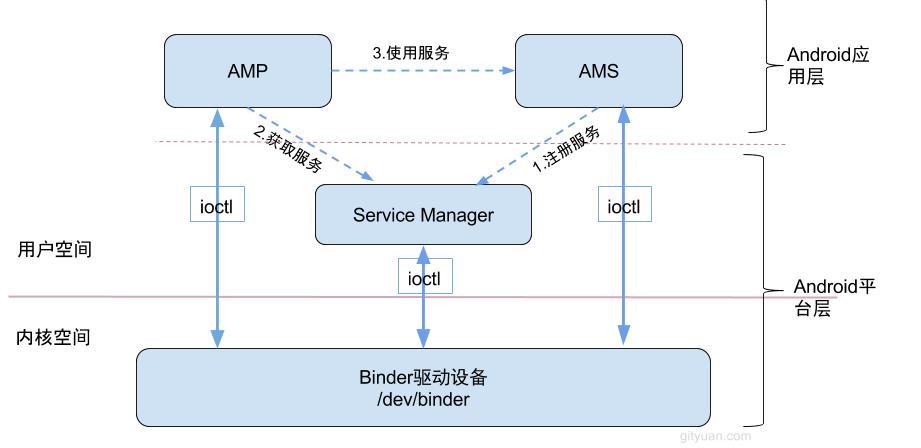

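Binder communication uses a client/server architecture. From a component point of view it consists of the Client, the Server, ServiceManager, and the Binder driver, where ServiceManager manages the various services in the system. The following architecture diagram shows the Binder objects involved in startService: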

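Both registering a service and obtaining a service go through ServiceManager. Note that ServiceManager here means the native ServiceManager (C++), not the framework-layer ServiceManager (Java). ServiceManager is the steward of the whole Binder mechanism and the daemon process of Android's Binder IPC. Before a Client and a Server can communicate, each must first obtain the ServiceManager interface. The result of a lookup can of course be cached, so that ServiceManager need not be queried every time.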

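The communication among Client, Server, and ServiceManager in the figure is itself based on Binder. Because it is Binder-based, it is also client/server, so each of the three major steps in the figure has its own client side and server side.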

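The interactions among Client, Server, and ServiceManager are drawn as dashed lines because they do not talk to each other directly; each talks to the Binder driver, and that is how IPC is achieved. The Binder driver lives in kernel space, while Client, Server, and ServiceManager live in user space. The Binder driver and ServiceManager can be regarded as Android platform infrastructure, while Client and Server belong to the Android application layer.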

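Each of these three processes is a complete Binder IPC. The rest of this article looks only at the third one, using a service, from the source code: how AMP.startService ends up calling AMS.startService.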

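Tips: If you only want the general picture and do not plan to dig into the source, you can skip the source analysis of the communication process; reading only the first and last parts of this article is enough to gain an understanding of Binder.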

[-> ActivityManagerNative.java ::ActivityManagerProxy]

public ComponentName startService(IApplicationThread caller, Intent service,

String resolvedType, String callingPackage, int userId) throws RemoteException

{

// Obtain or create the Parcel objects [see section 2.2]

Parcel data = Parcel.obtain();

Parcel reply = Parcel.obtain();

data.writeInterfaceToken(IActivityManager.descriptor);

data.writeStrongBinder(caller != null ? caller.asBinder() : null);

service.writeToParcel(data, 0);

// Write data into the Parcel [see section 2.3]

data.writeString(resolvedType);

data.writeString(callingPackage);

data.writeInt(userId);

// Pass the data through Binder [see section 2.5]

mRemote.transact(START_SERVICE_TRANSACTION, data, reply, 0);

// Read any exception carried by the reply

reply.readException();

// Create the ComponentName object from the reply data

ComponentName res = ComponentName.readFromParcel(reply);

// [see section 2.2.3]

data.recycle();

reply.recycle();

return res;

}

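Main steps, as the code above shows:

1. Obtain or create two Parcel objects: data carries the request and reply receives the response;
2. Pack the startService arguments into data;
3. Pass data through Binder with mRemote.transact(), which fills in reply;
4. Read any exception and the ComponentName result from reply, then recycle both Parcels.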

[-> Parcel.java]

public static Parcel obtain() {

final Parcel[] pool = sOwnedPool;

synchronized (pool) {

Parcel p;

//POOL_SIZE = 6

for (int i=0; i<POOL_SIZE; i++) {

p = pool[i];

if (p != null) {

pool[i] = null;

return p;

}

}

}

// No Parcel available in the cache pool, so create one directly [see step 2.2.1]

return new Parcel(0);

}

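sOwnedPool is a cache pool of size 6 that holds Parcel objects; it exists to avoid the cost of creating a new Parcel each time. What obtain() does:

1. Look in sOwnedPool for a cached Parcel object and, if one exists, return it directly;
2. Otherwise, create a new Parcel object.

[-> Parcel.java]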

private Parcel(long nativePtr) {

// Initialize the native pointer

init(nativePtr);

}

private void init(long nativePtr) {

if (nativePtr != 0) {

mNativePtr = nativePtr;

mOwnsNativeParcelObject = false;

} else {

// First creation takes this branch [see step 2.2.2]

mNativePtr = nativeCreate();

mOwnsNativeParcelObject = true;

}

}

nativeCreate is a native method; through JNI it enters the native layer and calls android_os_Parcel_create().

[-> android_os_Parcel.cpp]

static jlong android_os_Parcel_create(JNIEnv* env, jclass clazz)

{

Parcel* parcel = new Parcel();

return reinterpret_cast<jlong>(parcel);

}

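This creates the C++ Parcel object, casts its pointer to a long, and stores it in the Java-layer mNativePtr field. Once the Parcel object exists, data is written through it; writeString is used as the example below.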

public final void recycle() {

// Free the native parcel object

freeBuffer();

final Parcel[] pool;

// Choose which pool to return to

if (mOwnsNativeParcelObject) {

pool = sOwnedPool;

} else {

mNativePtr = 0;

pool = sHolderPool;

}

synchronized (pool) {

for (int i=0; i<POOL_SIZE; i++) {

if (pool[i] == null) {

pool[i] = this;

return;

}

}

}

}

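A Parcel that is no longer in use is put back into a cache pool for reuse; if the pool is full, it is not added. There are two Parcel object pools, and mOwnsNativeParcelObject decides which one is used:

1. mOwnsNativeParcelObject = true: the object was obtained through the no-argument obtain() and is recycled into sOwnedPool;
2. mOwnsNativeParcelObject = false: the object was obtained through obtain(long), which takes a nativePtr argument, and is recycled into sHolderPool.

[-> Parcel.java]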

public final void writeString(String val) {

// [see step 2.3.1]

nativeWriteString(mNativePtr, val);

}

[-> android_os_Parcel.cpp]

static void android_os_Parcel_writeString(JNIEnv* env, jclass clazz, jlong nativePtr, jstring val)

{

Parcel* parcel = reinterpret_cast<Parcel*>(nativePtr);

if (parcel != NULL) {

status_t err = NO_MEMORY;

if (val) {

const jchar* str = env->GetStringCritical(val, 0);

if (str) {

// [see step 2.3.2]

err = parcel->writeString16(

reinterpret_cast<const char16_t*>(str),

env->GetStringLength(val));

env->ReleaseStringCritical(val, str);

}

} else {

err = parcel->writeString16(NULL, 0);

}

if (err != NO_ERROR) {

signalExceptionForError(env, clazz, err);

}

}

}

[-> Parcel.cpp]

status_t Parcel::writeString16(const char16_t* str, size_t len)

{

if (str == NULL) return writeInt32(-1);

status_t err = writeInt32(len);

if (err == NO_ERROR) {

len *= sizeof(char16_t);

uint8_t* data = (uint8_t*)writeInplace(len+sizeof(char16_t));

if (data) {

// Copy the characters to the location data points to

memcpy(data, str, len);

*reinterpret_cast<char16_t*>(data+len) = 0;

return NO_ERROR;

}

err = mError;

}

return err;

}

Tips: Besides writeString(), Parcel.java has many native methods; each calls the corresponding function in android_os_Parcel.cpp, which in turn calls the corresponding method in Parcel.cpp.

Call flow: Parcel.java -> android_os_Parcel.cpp -> Parcel.cpp.

/frameworks/base/core/java/android/os/Parcel.java

/frameworks/base/core/jni/android_os_Parcel.cpp

/frameworks/native/libs/binder/Parcel.cpp
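The byte layout that the writeString16 excerpt produces can be illustrated with a small Java sketch: an int32 length, the UTF-16 code units, and a 2-byte terminator, or a lone -1 for a null string. `String16LayoutSketch` and `encode` are hypothetical names. Two details are assumptions rather than something the excerpt shows: little-endian byte order, and rounding the payload up to a multiple of 4 bytes, which is assumed to happen inside writeInplace.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

public class String16LayoutSketch {
    /** Encodes a string the way the writeString16 excerpt lays it out. */
    static byte[] encode(String s) {
        if (s == null) {
            // A null string is written as the single int32 value -1.
            return ByteBuffer.allocate(4).order(ByteOrder.LITTLE_ENDIAN).putInt(-1).array();
        }
        int payload = s.length() * 2 + 2;  // UTF-16 code units plus a 2-byte terminator
        int padded = (payload + 3) & ~3;   // assumption: rounded up to a multiple of 4
        ByteBuffer buf = ByteBuffer.allocate(4 + padded).order(ByteOrder.LITTLE_ENDIAN);
        buf.putInt(s.length());            // length prefix, counted in code units
        for (int i = 0; i < s.length(); i++) {
            buf.putChar(s.charAt(i));
        }
        buf.putChar('\0');                 // terminator; any remaining padding stays zero
        return buf.array();
    }

    public static void main(String[] args) {
        System.out.println(encode("ab").length);   // prints "12"
        System.out.println(encode("abc").length);  // prints "12"
        System.out.println(encode(null).length);   // prints "4"
    }
}
```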

Simply put, to see where mRemote comes from we have to start with how the ActivityManagerProxy object (AMP for short) is created. AMP is obtained through ActivityManagerNative.getDefault().

[-> ActivityManagerNative.java]

static public IActivityManager getDefault() {

// [see step 2.4.2]

return gDefault.get();

}

gDefault is of type Singleton<IActivityManager>, a singleton. Next, look at Singleton.get():

public abstract class Singleton<IActivityManager> {

public final IActivityManager get() {

synchronized (this) {

if (mInstance == null) {

// The first call uses create() to obtain the AMP object [see step 2.4.3]

mInstance = create();

}

return mInstance;

}

}

}

The instance is created on the first call and saved in mInstance; afterwards it is used directly.

private static final Singleton<IActivityManager> gDefault = new Singleton<IActivityManager>() {

protected IActivityManager create() {

// Get the service named "activity"

IBinder b = ServiceManager.getService("activity");

// Create the AMP object [see step 2.4.4]

IActivityManager am = asInterface(b);

return am;

}

};

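From the article "Binder series part 7: framework-layer analysis" (Binder系列7, framework層分析) we know that ServiceManager.getService("activity") returns a BinderProxy, a proxy object that points to the target service AMS; through this proxy the process hosting AMS can be located.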

[-> ActivityManagerNative.java]

public abstract class ActivityManagerNative extends Binder implements IActivityManager

{

static public IActivityManager asInterface(IBinder obj) {

if (obj == null) {

return null;

}

// Here obj = BinderProxy, descriptor = "android.app.IActivityManager"; [see step 2.4.5]

IActivityManager in = (IActivityManager)obj.queryLocalInterface(descriptor);

if (in != null) { // null in this case

return in;

}

// [see step 2.4.6]

return new ActivityManagerProxy(obj);

}

...

}

At this point obj is a BinderProxy object, which records the handle referring to the AMS service's Binder object in the remote system_server process.

[-> Binder.java]

public class Binder implements IBinder {

// Called on a Binder object, the return value is non-null

public IInterface queryLocalInterface(String descriptor) {

// mDescriptor is assigned during attachInterface()

if (mDescriptor.equals(descriptor)) {

return mOwner;

}

return null;

}

}

// The call from the previous section [2.4.4] lands here and returns null

final class BinderProxy implements IBinder {

// Called on a BinderProxy object, the return value is null

public IInterface queryLocalInterface(String descriptor) {

return null;

}

}

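In Binder IPC, when the call stays within one process, asInterface() returns the local Binder object itself; when the call crosses processes, it returns a proxy that wraps the remote BinderProxy.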
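The local-versus-remote decision in asInterface() can be reproduced in a self-contained sketch. The `IInterface` and `IBinder` types below are minimal local re-declarations written for this example, not the Android classes, and `IFoo`, `LocalFoo`, `RemoteHandle`, and `FooProxy` are hypothetical names.

```java
public class AsInterfaceSketch {
    interface IInterface {}

    interface IBinder {
        IInterface queryLocalInterface(String descriptor);
    }

    interface IFoo extends IInterface {
        String where();
    }

    static final String DESCRIPTOR = "demo.IFoo";

    /** Same-process object (role of Binder): answers the query with itself. */
    static final class LocalFoo implements IBinder, IFoo {
        @Override
        public IInterface queryLocalInterface(String descriptor) {
            return DESCRIPTOR.equals(descriptor) ? this : null;
        }

        @Override
        public String where() {
            return "local";
        }
    }

    /** Cross-process handle (role of BinderProxy): never has a local implementation. */
    static final class RemoteHandle implements IBinder {
        @Override
        public IInterface queryLocalInterface(String descriptor) {
            return null;
        }
    }

    /** Wrapper created for the cross-process case (role of AMP). */
    static final class FooProxy implements IFoo {
        final IBinder remote;

        FooProxy(IBinder remote) {
            this.remote = remote;
        }

        @Override
        public String where() {
            return "proxy";
        }
    }

    static IFoo asInterface(IBinder obj) {
        if (obj == null) {
            return null;
        }
        IInterface in = obj.queryLocalInterface(DESCRIPTOR);
        if (in != null) {
            return (IFoo) in;        // same process: use the object directly
        }
        return new FooProxy(obj);    // different process: wrap the handle
    }

    public static void main(String[] args) {
        System.out.println(asInterface(new LocalFoo()).where());     // prints "local"
        System.out.println(asInterface(new RemoteHandle()).where()); // prints "proxy"
    }
}
```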

[-> ActivityManagerNative.java :: AMP]

class ActivityManagerProxy implements IActivityManager

{

public ActivityManagerProxy(IBinder remote)

{

mRemote = remote;

}

}

So mRemote is the BinderProxy object that points to the AMS service.

[-> Binder.java ::BinderProxy]

final class BinderProxy implements IBinder {

public boolean transact(int code, Parcel data, Parcel reply, int flags) throws RemoteException {

// Checks whether the Parcel is larger than 800 KB

Binder.checkParcel(this, code, data, "Unreasonably large binder buffer");

// [see section 2.6]

return transactNative(code, data, reply, flags);

}

}

In mRemote.transact(), code = START_SERVICE_TRANSACTION, and data holds six items: descriptor, caller, intent, resolvedType, callingPackage, and userId.

transactNative is a native method; through JNI it calls android_os_BinderProxy_transact.

[-> android_util_Binder.cpp]

static jboolean android_os_BinderProxy_transact(JNIEnv* env, jobject obj,

jint code, jobject dataObj, jobject replyObj, jint flags)

{

...

// Convert the Java Parcel to the C++ Parcel

Parcel* data = parcelForJavaObject(env, dataObj);

Parcel* reply = parcelForJavaObject(env, replyObj);

// gBinderProxyOffsets.mObject holds the new BpBinder(handle) object

IBinder* target = (IBinder*) env->GetLongField(obj, gBinderProxyOffsets.mObject);

...

// This is BpBinder::transact() [see section 2.7]

status_t err = target->transact(code, *data, reply, flags);

...

// Finally, throw the appropriate exception based on how transact went

signalExceptionForError(env, obj, err, true , data->dataSize());

return JNI_FALSE;

}

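gBinderProxyOffsets.mObject holds the BpBinder object. The JNI methods involved are registered at boot, when Zygote calls AndroidRuntime::startReg.

One of the registration steps, register_android_os_Binder(), initializes and registers BinderProxy and assigns gBinderProxyOffsets. Next, step into BpBinder::transact().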

[-> BpBinder.cpp]

status_t BpBinder::transact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)

{

if (mAlive) {

// [see section 2.8]

status_t status = IPCThreadState::self()->transact(

mHandle, code, data, reply, flags);

if (status == DEAD_OBJECT) mAlive = 0;

return status;

}

return DEAD_OBJECT;

}

IPCThreadState::self() is a per-thread singleton: it guarantees that each thread has exactly one instance.
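The "one instance per thread" idea can be sketched in Java with a `ThreadLocal`. This only illustrates the pattern; the native IPCThreadState is C++ and keeps its instance in thread-local storage by other means. `PerThreadStateSketch`, `ThreadState`, and `selfOnNewThread` are hypothetical names.

```java
public class PerThreadStateSketch {
    /** Hypothetical per-thread state, standing in for IPCThreadState. */
    static final class ThreadState {
        final Thread owner = Thread.currentThread();
    }

    // One slot per thread; the initializer runs on a thread's first call to get().
    private static final ThreadLocal<ThreadState> TLS =
            ThreadLocal.withInitial(ThreadState::new);

    /** Returns the calling thread's instance, creating it on first use. */
    static ThreadState self() {
        return TLS.get();
    }

    /** Calls self() on a freshly started thread and returns what that thread saw. */
    static ThreadState selfOnNewThread() {
        ThreadState[] seen = new ThreadState[1];
        Thread t = new Thread(() -> seen[0] = self());
        t.start();
        try {
            t.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return seen[0];
    }

    public static void main(String[] args) {
        System.out.println(self() == self());            // prints "true"
        System.out.println(self() == selfOnNewThread()); // prints "false"
    }
}
```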

[-> IPCThreadState.cpp]

status_t IPCThreadState::transact(int32_t handle,

uint32_t code, const Parcel& data,

Parcel* reply, uint32_t flags)

{

status_t err = data.errorCheck(); // check the data for errors

flags |= TF_ACCEPT_FDS;

....

if (err == NO_ERROR) {

// Transfer the data [see section 2.9]

err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);

}

if (err != NO_ERROR) {

if (reply) reply->setError(err);

return (mLastError = err);

}

// By default calls are not oneway, so the caller waits for the server's result

if ((flags & TF_ONE_WAY) == 0) {

if (reply) {

// reply is not null [see section 2.10]

err = waitForResponse(reply);

}else {

Parcel fakeReply;

err = waitForResponse(&fakeReply);

}

} else {

err = waitForResponse(NULL, NULL);

}

return err;

}

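The main steps of transact(), as the code above shows:

1. writeTransactionData() writes the data into mOut; at this point mIn has no data yet;
2. waitForResponse() then loops, and once a response has been read into mIn it acts according to the response code received.

How waitForResponse is called depends on whether TF_ONE_WAY is set: with oneway it is waitForResponse(NULL, NULL); without it, waitForResponse(reply) or waitForResponse(&fakeReply).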

[-> IPCThreadState.cpp]

status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,

int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)

{

binder_transaction_data tr;

tr.target.ptr = 0;

tr.target.handle = handle; // handle refers to AMS

tr.code = code; // START_SERVICE_TRANSACTION

tr.flags = binderFlags; // 0

tr.cookie = 0;

tr.sender_pid = 0;

tr.sender_euid = 0;

const status_t err = data.errorCheck();

if (err == NO_ERROR) {

// data holds the startService information

tr.data_size = data.ipcDataSize(); // mDataSize

tr.data.ptr.buffer = data.ipcData(); // mData pointer

tr.offsets_size = data.ipcObjectsCount()*sizeof(binder_size_t); //mObjectsSize

tr.data.ptr.offsets = data.ipcObjects(); // mObjects pointer

}

...

mOut.writeInt32(cmd); //cmd = BC_TRANSACTION

mOut.write(&tr, sizeof(tr)); // write the binder_transaction_data

return NO_ERROR;

}

This writes the data into mOut.

status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)

{

int32_t cmd;

int32_t err;

while (1) {

if ((err=talkWithDriver()) < NO_ERROR) break; // [see section 2.11]

err = mIn.errorCheck();

if (err < NO_ERROR) break; // leave the loop on error

if (mIn.dataAvail() == 0) continue; // proceed only once mIn has data

cmd = mIn.readInt32();

switch (cmd) {

case BR_TRANSACTION_COMPLETE: ... goto finish;

case BR_DEAD_REPLY: ... goto finish;

case BR_FAILED_REPLY: ... goto finish;

case BR_REPLY: ... goto finish;

default:

err = executeCommand(cmd); // [see section 2.10.1]

if (err != NO_ERROR) goto finish;

break;

}

}

finish:

if (err != NO_ERROR) {

if (reply) reply->setError(err); // report the error code back to the original caller

}

return err;

}

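The BR_ commands commonly seen in this process are BR_TRANSACTION_COMPLETE, BR_DEAD_REPLY, BR_FAILED_REPLY, and BR_REPLY.

Rule: BC_TRANSACTION + BC_REPLY = BR_TRANSACTION_COMPLETE + BR_DEAD_REPLY + BR_FAILED_REPLY. In other words, every BC_TRANSACTION or BC_REPLY sent to the driver is answered with one of BR_TRANSACTION_COMPLETE, BR_DEAD_REPLY, or BR_FAILED_REPLY.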
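When the wait loop ends can be modelled with a small Java simulation. It is a sketch of the behavior this article describes, not a port of waitForResponse(): the case bodies elided with "..." above are not reproduced, and `WaitForResponseSketch`, the `Br` enum, and the list standing in for mIn are all hypothetical.

```java
import java.util.Arrays;
import java.util.List;

public class WaitForResponseSketch {
    /** A few BR_ commands, modelled as an enum for this sketch. */
    enum Br { BR_NOOP, BR_TRANSACTION_COMPLETE, BR_REPLY, BR_DEAD_REPLY, BR_FAILED_REPLY }

    /** Returns the command that ends the wait, or null if the input runs out. */
    static Br waitForResponse(List<Br> incoming, boolean oneway) {
        for (Br cmd : incoming) {
            switch (cmd) {
                case BR_TRANSACTION_COMPLETE:
                    if (oneway) {
                        return cmd;  // oneway: the driver's receipt is enough
                    }
                    break;           // otherwise keep waiting for the reply
                case BR_REPLY:
                case BR_DEAD_REPLY:
                case BR_FAILED_REPLY:
                    return cmd;      // a reply or a failure ends the wait
                default:
                    break;           // other commands are handled elsewhere
            }
        }
        return null;
    }

    public static void main(String[] args) {
        List<Br> fromDriver = Arrays.asList(
                Br.BR_NOOP, Br.BR_TRANSACTION_COMPLETE, Br.BR_NOOP, Br.BR_REPLY);
        System.out.println(waitForResponse(fromDriver, true));  // prints "BR_TRANSACTION_COMPLETE"
        System.out.println(waitForResponse(fromDriver, false)); // prints "BR_REPLY"
    }
}
```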

status_t IPCThreadState::executeCommand(int32_t cmd)

{

BBinder* obj;

RefBase::weakref_type* refs;

status_t result = NO_ERROR;

switch ((uint32_t)cmd) {

case BR_ERROR: ...

case BR_OK: ...

case BR_ACQUIRE: ...

case BR_RELEASE: ...

case BR_INCREFS: ...

case BR_TRANSACTION: ... // the Binder driver delivers a message to the server side

case BR_DEAD_BINDER: ...

case BR_CLEAR_DEATH_NOTIFICATION_DONE: ...

case BR_NOOP: ...

case BR_SPAWN_LOOPER: ... // create a new binder thread

default: ...

}

}

This handles the remaining BR_ commands.

// mOut has data; mIn has none yet. doReceive defaults to true

status_t IPCThreadState::talkWithDriver(bool doReceive)

{

binder_write_read bwr;

const bool needRead = mIn.dataPosition() >= mIn.dataSize();

const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

bwr.write_size = outAvail;

bwr.write_buffer = (uintptr_t)mOut.data();

if (doReceive && needRead) {

// Fill in the receive-buffer information; data received from the driver is written into mIn

bwr.read_size = mIn.dataCapacity();

bwr.read_buffer = (uintptr_t)mIn.data();

} else {

bwr.read_size = 0;

bwr.read_buffer = 0;

}

// Return immediately when there is neither input nor output data

if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR;

bwr.write_consumed = 0;

bwr.read_consumed = 0;

status_t err;

do {

// ioctl performs the read/write, entering the Binder driver via a syscall and calling binder_ioctl [see section 3.1]

if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)

err = NO_ERROR;

else

err = -errno;

...

} while (err == -EINTR);

if (err >= NO_ERROR) {

if (bwr.write_consumed > 0) {

if (bwr.write_consumed < mOut.dataSize())

mOut.remove(0, bwr.write_consumed);

else

mOut.setDataSize(0);

}

if (bwr.read_consumed > 0) {

mIn.setDataSize(bwr.read_consumed);

mIn.setDataPosition(0);

}

return NO_ERROR;

}

return err;

}

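binder_write_read is the structure used to exchange data with the Binder device. The ioctl on mDriverFD is where data is actually read from and written to the Binder driver. The ioctl() call goes through a syscall and ends up in binder_ioctl().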

[-> binder.c]

The argument passed in from section 2.11 is cmd = BINDER_WRITE_READ.

static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)

{

int ret;

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread;

// When binder_stop_on_user_error >= 2, the thread joins the wait queue and sleeps; the value defaults to 0

ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

...

binder_lock(__func__);

// Find or create the binder_thread structure

thread = binder_get_thread(proc);

...

switch (cmd) {

case BINDER_WRITE_READ:

// [see section 3.2]

ret = binder_ioctl_write_read(filp, cmd, arg, thread);

break;

...

}

ret = 0;

err:

if (thread)

thread->looper &= ~BINDER_LOOPER_STATE_NEED_RETURN;

binder_unlock(__func__);

wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

return ret;

}

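First, the binder_proc structure is obtained from the file pointer that was passed in, and the binder_thread is looked up in it. If the current thread has already been added to proc's thread list, it is returned directly; otherwise a binder_thread is created and the current thread is added to proc.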

static int binder_ioctl_write_read(struct file *filp,

unsigned int cmd, unsigned long arg,

struct binder_thread *thread)

{

int ret = 0;

struct binder_proc *proc = filp->private_data;

unsigned int size = _IOC_SIZE(cmd);

void __user *ubuf = (void __user *)arg;

struct binder_write_read bwr;

if (size != sizeof(struct binder_write_read)) {

ret = -EINVAL;

goto out;

}

// Copy the bwr structure from user space into kernel space

if (copy_from_user(&bwr, ubuf, sizeof(bwr))) {

ret = -EFAULT;

goto out;

}

if (bwr.write_size > 0) {

// Put the data into the target process [see section 3.3]

ret = binder_thread_write(proc, thread,

bwr.write_buffer,

bwr.write_size,

&bwr.write_consumed);

// On failure, write the kernel bwr structure back to user space and leave the function

if (ret < 0) {

bwr.read_consumed = 0;

if (copy_to_user_preempt_disabled(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto out;

}

}

if (bwr.read_size > 0) {

// Read data from this thread's own queue [see section 3.5]

ret = binder_thread_read(proc, thread, bwr.read_buffer,

bwr.read_size,

&bwr.read_consumed,

filp->f_flags & O_NONBLOCK);

// If the process's todo queue has data, wake up the threads waiting on it

if (!list_empty(&proc->todo))

wake_up_interruptible(&proc->wait);

// On failure, write the kernel bwr structure back to user space and leave the function

if (ret < 0) {

if (copy_to_user_preempt_disabled(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto out;

}

}

if (copy_to_user(ubuf, &bwr, sizeof(bwr))) {

ret = -EFAULT;

goto out;

}

out:

return ret;

}

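Here arg is a binder_write_read structure. The mOut data is stored in write_buffer, so write_size > 0. Because talkWithDriver() was called with doReceive = true while mIn was empty, read_size is also nonzero (mIn.dataCapacity()), so the read phase follows the write phase in the same ioctl. The bwr structure is first copied from user space into kernel space, and then binder_thread_write() runs.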

static int binder_thread_write(struct binder_proc *proc,

struct binder_thread *thread,

binder_uintptr_t binder_buffer, size_t size,

binder_size_t *consumed)

{

uint32_t cmd;

void __user *buffer = (void __user *)(uintptr_t)binder_buffer;

void __user *ptr = buffer + *consumed;

void __user *end = buffer + size;

while (ptr < end && thread->return_error == BR_OK) {

// Copy the cmd from user space; here it is BC_TRANSACTION

if (get_user(cmd, (uint32_t __user *)ptr)) return -EFAULT;

ptr += sizeof(uint32_t);

switch (cmd) {

case BC_TRANSACTION:

case BC_REPLY: {

struct binder_transaction_data tr;

// Copy the binder_transaction_data from user space

if (copy_from_user(&tr, ptr, sizeof(tr))) return -EFAULT;

ptr += sizeof(tr);

// [see section 3.4]

binder_transaction(proc, thread, &tr, cmd == BC_REPLY);

break;

}

...

}

*consumed = ptr - buffer;

}

return 0;

}

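Commands are read one after another from the address that binder_buffer points to; only for BC_TRANSACTION or BC_REPLY is binder_transaction() called to process the transaction.

Here BC_TRANSACTION is being sent, so reply = 0.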

static void binder_transaction(struct binder_proc *proc,

struct binder_thread *thread,

struct binder_transaction_data *tr, int reply){

struct binder_transaction *t;

struct binder_work *tcomplete;

binder_size_t *offp, *off_end;

binder_size_t off_min;

struct binder_proc *target_proc;

struct binder_thread *target_thread = NULL;

struct binder_node *target_node = NULL;

struct list_head *target_list;

wait_queue_head_t *target_wait;

struct binder_transaction *in_reply_to = NULL;

if (reply) {

...

}else {

if (tr->target.handle) {

struct binder_ref *ref;

// From the handle find the binder_ref, and from the binder_ref find the binder_node

ref = binder_get_ref(proc, tr->target.handle);

target_node = ref->node;

} else {

target_node = binder_context_mgr_node;

}

// From the binder_node find the binder_proc

target_proc = target_node->proc;

}

if (target_thread) {

e->to_thread = target_thread->pid;

target_list = &target_thread->todo;

target_wait = &target_thread->wait;

} else {

// On the first pass target_thread is NULL

target_list = &target_proc->todo;

target_wait = &target_proc->wait;

}

t = kzalloc(sizeof(*t), GFP_KERNEL);

tcomplete = kzalloc(sizeof(*tcomplete), GFP_KERNEL);

// For non-oneway calls, save the current thread in the transaction's from field

if (!reply && !(tr->flags & TF_ONE_WAY))

t->from = thread;

else

t->from = NULL;

t->sender_euid = task_euid(proc->tsk);

t->to_proc = target_proc; // the target process of this call is system_server

t->to_thread = target_thread;

t->code = tr->code; // code = START_SERVICE_TRANSACTION for this call

t->flags = tr->flags; // flags = 0 for this call

t->priority = task_nice(current);

// Allocate buffer space from the target process

t->buffer = binder_alloc_buf(target_proc, tr->data_size,

tr->offsets_size, !reply && (t->flags & TF_ONE_WAY));

t->buffer->allow_user_free = 0;

t->buffer->transaction = t;

t->buffer->target_node = target_node;

if (target_node)

binder_inc_node(target_node, 1, 0, NULL); // increment the reference count

offp = (binder_size_t *)(t->buffer->data + ALIGN(tr->data_size, sizeof(void *)));

// Copy ptr.buffer and ptr.offsets of the user-space binder_transaction_data into the kernel

copy_from_user(t->buffer->data, (const void __user *)(uintptr_t)tr->data.ptr.buffer, tr->data_size);

copy_from_user(offp, (const void __user *)(uintptr_t)tr->data.ptr.offsets, tr->offsets_size);

off_end = (void *)offp + tr->offsets_size;

for (; offp < off_end; offp++) {

struct flat_binder_object *fp;

fp = (struct flat_binder_object *)(t->buffer->data + *offp);

off_min = *offp + sizeof(struct flat_binder_object);

switch (fp->type) {

...

case BINDER_TYPE_HANDLE:

case BINDER_TYPE_WEAK_HANDLE: {

// Handle reference counting

struct binder_ref *ref = binder_get_ref(proc, fp->handle);

if (ref->node->proc == target_proc) {

if (fp->type == BINDER_TYPE_HANDLE)

fp->type = BINDER_TYPE_BINDER;

else

fp->type = BINDER_TYPE_WEAK_BINDER;

fp->binder = ref->node->ptr;

fp->cookie = ref->node->cookie;

binder_inc_node(ref->node, fp->type == BINDER_TYPE_BINDER, 0, NULL);

} else {

struct binder_ref *new_ref;

new_ref = binder_get_ref_for_node(target_proc, ref->node);

fp->handle = new_ref->desc;

binder_inc_ref(new_ref, fp->type == BINDER_TYPE_HANDLE, NULL);

}

} break;

...

default:

return_error = BR_FAILED_REPLY;

goto err_bad_object_type;

}

}

if (reply) {

binder_pop_transaction(target_thread, in_reply_to);

} else if (!(t->flags & TF_ONE_WAY)) {

// Not a reply and not oneway: record the transaction-stack information

t->need_reply = 1;

t->from_parent = thread->transaction_stack;

thread->transaction_stack = t;

} else {

// Not a reply but oneway: add to the async todo queue

if (target_node->has_async_transaction) {

target_list = &target_node->async_todo;

target_wait = NULL;

} else

target_node->has_async_transaction = 1;

}

// Add the new transaction to the target queue

t->work.type = BINDER_WORK_TRANSACTION;

list_add_tail(&t->work.entry, target_list);

// Add BINDER_WORK_TRANSACTION_COMPLETE to the current thread's todo queue

tcomplete->type = BINDER_WORK_TRANSACTION_COMPLETE;

list_add_tail(&tcomplete->entry, &thread->todo);

if (target_wait)

wake_up_interruptible(target_wait); // wake up the wait queue

return;

}

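Main functions:

1. Looking up the target process: handle -> binder_ref -> binder_node -> binder_proc

2. Adding `BINDER_WORK_TRANSACTION` to the target queue target_list. For a newly initiated transaction the target queue is `target_proc->todo`; for a reply transaction it is `target_thread->todo`; for a oneway non-reply transaction whose node already has an async transaction in flight, it is `target_node->async_todo`.

3. Adding `BINDER_WORK_TRANSACTION_COMPLETE` to the current thread's todo queue

The current thread's todo queue now holds work, so binder_thread_read() runs next to process it.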
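The queue selection in step 2 can be condensed into a small Java model. It follows only the branches visible in the binder_transaction() excerpt; `TargetQueueSketch`, `Queue`, `Node`, and `selectQueue` are hypothetical names, and the booleans stand in for the kernel structures.

```java
public class TargetQueueSketch {
    /** The three queues a new work item can land on. */
    enum Queue { TARGET_PROC_TODO, TARGET_THREAD_TODO, NODE_ASYNC_TODO }

    /** Stand-in for binder_node; only the async flag matters here. */
    static final class Node {
        boolean hasAsyncTransaction;
    }

    /** Mirrors the branch structure of the excerpt, nothing more. */
    static Queue selectQueue(boolean reply, boolean oneway, boolean hasTargetThread, Node node) {
        // A known target thread gets the work directly; otherwise the process queue is used.
        Queue queue = hasTargetThread ? Queue.TARGET_THREAD_TODO : Queue.TARGET_PROC_TODO;
        if (!reply && oneway) {
            if (node.hasAsyncTransaction) {
                queue = Queue.NODE_ASYNC_TODO;    // an async transaction is already in flight
            } else {
                node.hasAsyncTransaction = true;  // the first async transaction goes straight through
            }
        }
        return queue;
    }

    public static void main(String[] args) {
        Node node = new Node();
        System.out.println(selectQueue(false, false, false, node)); // prints "TARGET_PROC_TODO"
        System.out.println(selectQueue(true, false, true, node));   // prints "TARGET_THREAD_TODO"
        System.out.println(selectQueue(false, true, false, node));  // prints "TARGET_PROC_TODO"
        System.out.println(selectQueue(false, true, false, node));  // prints "NODE_ASYNC_TODO"
    }
}
```

The third and fourth calls differ only in the node's state: the first oneway transaction sets the flag, and later ones queue up behind it.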

#### 3.5 binder_thread_read

```c

binder_thread_read(){

// When no bytes have been consumed yet, put the BR_NOOP response code at ptr

if (*consumed == 0) {

if (put_user(BR_NOOP, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

}

retry:

// false when the todo queue has data

wait_for_proc_work = thread->transaction_stack == NULL &&

list_empty(&thread->todo);

if (wait_for_proc_work) {

if (non_block) {

...

} else

// When the todo queue has no data, the thread waits here for data to arrive

ret = wait_event_freezable_exclusive(proc->wait, binder_has_proc_work(proc, thread));

} else {

if (non_block) {

...

} else

// This branch is taken; if the thread's todo queue has no data, wait on the current thread's wait queue

ret = wait_event_freezable(thread->wait, binder_has_thread_work(thread));

}

if (ret)

return ret; // for non-blocking calls, return directly

while (1) {

uint32_t cmd;

struct binder_transaction_data tr;

struct binder_work *w;

struct binder_transaction *t = NULL;

// First try to take work from the thread's todo queue

if (!list_empty(&thread->todo)) {

w = list_first_entry(&thread->todo, struct binder_work, entry);

// The thread's todo queue is empty, so take work from the process's todo queue

} else if (!list_empty(&proc->todo) && wait_for_proc_work) {

w = list_first_entry(&proc->todo, struct binder_work, entry);

} else {

// No data: go back to retry

if (ptr - buffer == 4 &&

!(thread->looper & BINDER_LOOPER_STATE_NEED_RETURN))

goto retry;

break;

}

switch (w->type) {

case BINDER_WORK_TRANSACTION:

// Get the transaction data

t = container_of(w, struct binder_transaction, work);

break;

case BINDER_WORK_TRANSACTION_COMPLETE:

cmd = BR_TRANSACTION_COMPLETE;

// Write BR_TRANSACTION_COMPLETE to *ptr

put_user(cmd, (uint32_t __user *)ptr);

list_del(&w->entry);

kfree(w);

break;

case BINDER_WORK_NODE: ... break;

case BINDER_WORK_DEAD_BINDER:

case BINDER_WORK_DEAD_BINDER_AND_CLEAR:

case BINDER_WORK_CLEAR_DEATH_NOTIFICATION: ... break;

}

if (!t)

continue; // only BINDER_WORK_TRANSACTION continues below

if (t->buffer->target_node) {

// Get the target node

struct binder_node *target_node = t->buffer->target_node;

tr.target.ptr = target_node->ptr;

tr.cookie = target_node->cookie;

t->saved_priority = task_nice(current);

...

cmd = BR_TRANSACTION; // set the command to BR_TRANSACTION

} else {

tr.target.ptr = NULL;

tr.cookie = NULL;

cmd = BR_REPLY; // set the command to BR_REPLY

}

tr.code = t->code;

tr.flags = t->flags;

tr.sender_euid = t->sender_euid;

if (t->from) {

struct task_struct *sender = t->from->proc->tsk;

tr.sender_pid = task_tgid_nr_ns(sender,

current->nsproxy->pid_ns);

} else {

tr.sender_pid = 0;

}

tr.data_size = t->buffer->data_size;

tr.offsets_size = t->buffer->offsets_size;

tr.data.ptr.buffer = (void *)t->buffer->data +

proc->user_buffer_offset;

tr.data.ptr.offsets = tr.data.ptr.buffer +

ALIGN(t->buffer->data_size,

sizeof(void *));

// Write cmd and the data back to user space

if (put_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (copy_to_user(ptr, &tr, sizeof(tr)))

return -EFAULT;

ptr += sizeof(tr);

list_del(&t->work.entry);

t->buffer->allow_user_free = 1;

if (cmd == BR_TRANSACTION && !(t->flags & TF_ONE_WAY)) {

t->to_parent = thread->transaction_stack;

t->to_thread = thread;

thread->transaction_stack = t;

} else {

t->buffer->transaction = NULL;

kfree(t); // communication finished, so free it

}

break;

}

done:

*consumed = ptr - buffer;

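// When requested threads plus ready threads equals 0, started threads is below the maximum (15),

// and the looper state is registered or entered, a new thread is spawned.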

if (proc->requested_threads + proc->ready_threads == 0 &&

proc->requested_threads_started < proc->max_threads &&

(thread->looper & (BINDER_LOOPER_STATE_REGISTERED |

BINDER_LOOPER_STATE_ENTERED))) {

proc->requested_threads++;

// Emit the BR_SPAWN_LOOPER command, which asks for a new thread to be created

put_user(BR_SPAWN_LOOPER, (uint32_t __user *)buffer);

}

return 0;

}

```

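After binder_transaction() has queued BINDER_WORK_TRANSACTION_COMPLETE on the current thread, binder_thread_read() turns it into BR_TRANSACTION_COMPLETE, and talkWithDriver() delivers that into the thread's mIn. Since startService does not use oneway, the initiating process stays in waitForResponse(), waiting for BR_REPLY. In binder_transaction(), besides queuing BINDER_WORK_TRANSACTION_COMPLETE on its own thread, the caller also queued a BINDER_WORK_TRANSACTION for the target process (system_server here). An idle binder thread in system_server sits in binder_thread_read() waiting for new work for its process or thread; it picks up the BINDER_WORK_TRANSACTION and, following the same flow through binder_thread_read(), produces a BR_TRANSACTION command.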

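Next, follow the execution flow of a system_server binder thread (IPC below is short for IPCThreadState): IPC.joinThreadPool -> IPC.getAndExecuteCommand() -> IPC.talkWithDriver(). Once talkWithDriver() has received the transaction, the thread enters IPC.executeCommand(), so we continue from executeCommand().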

status_t IPCThreadState::executeCommand(int32_t cmd)

{

BBinder* obj;

RefBase::weakref_type* refs;

status_t result = NO_ERROR;

switch ((uint32_t)cmd) {

...

case BR_TRANSACTION:

{

binder_transaction_data tr;

result = mIn.read(&tr, sizeof(tr)); // read from mIn

if (result != NO_ERROR) break;

Parcel buffer;

buffer.ipcSetDataReference(

reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),

tr.data_size,

reinterpret_cast<const binder_size_t*>(tr.data.ptr.offsets),

tr.offsets_size/sizeof(binder_size_t), freeBuffer, this);

const pid_t origPid = mCallingPid;

const uid_t origUid = mCallingUid;

const int32_t origStrictModePolicy = mStrictModePolicy;

const int32_t origTransactionBinderFlags = mLastTransactionBinderFlags;

mCallingPid = tr.sender_pid;

mCallingUid = tr.sender_euid;

mLastTransactionBinderFlags = tr.flags;

int curPrio = getpriority(PRIO_PROCESS, mMyThreadId);

if (gDisableBackgroundScheduling) {

... // this branch is not taken

} else {

if (curPrio >= ANDROID_PRIORITY_BACKGROUND) {

set_sched_policy(mMyThreadId, SP_BACKGROUND);

}

}

Parcel reply;

status_t error;

if (tr.target.ptr) {

// Try to obtain a strong reference from the weak reference

if (reinterpret_cast<RefBase::weakref_type*>(

tr.target.ptr)->attemptIncStrong(this)) {

// tr.cookie holds a JavaBBinder, a subclass of BBinder [see step 4.3]

error = reinterpret_cast<BBinder*>(tr.cookie)->transact(tr.code, buffer,

&reply, tr.flags);

reinterpret_cast<BBinder*>(tr.cookie)->decStrong(this);

} else {

error = UNKNOWN_TRANSACTION;

}

} else {

error = the_context_object->transact(tr.code, buffer, &reply, tr.flags);

}

if ((tr.flags & TF_ONE_WAY) == 0) {

if (error < NO_ERROR) reply.setError(error);

sendReply(reply, 0);

}

...

}

break;

...

}

if (result != NO_ERROR) {

mLastError = result;

}

return result;

}

[-> Binder.cpp ::BBinder ]

status_t BBinder::transact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)

{

data.setDataPosition(0);

status_t err = NO_ERROR;

switch (code) {

case PING_TRANSACTION:

reply->writeInt32(pingBinder());

break;

default:

err = onTransact(code, data, reply, flags); // [see step 4.4]

break;

}

if (reply != NULL) {

reply->setDataPosition(0);

}

return err;

}

[-> android_util_Binder.cpp]

virtual status_t onTransact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags = 0)

{

JNIEnv* env = javavm_to_jnienv(mVM);

IPCThreadState* thread_state = IPCThreadState::self();

// Call Binder.execTransact [see step 4.5]

jboolean res = env->CallBooleanMethod(mObject, gBinderOffsets.mExecTransact,

code, reinterpret_cast<jlong>(&data), reinterpret_cast<jlong>(reply), flags);

jthrowable excep = env->ExceptionOccurred();

if (excep) {

res = JNI_FALSE;

// An exception occurred: clean up the JNI local reference

env->DeleteLocalRef(excep);

}

...

return res != JNI_FALSE ? NO_ERROR : UNKNOWN_TRANSACTION;

}

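Recall AndroidRuntime::startReg: one of its steps is register_android_os_Binder(), which binds gBinderOffsets.mExecTransact to the execTransact() method in Binder.java. See the initialization part in section 2 of "Binder series part 7: framework-layer analysis".

As for mObject: during service registration (addService), writeStrongBinder is called, the Binder object is passed as the JavaBBinder constructor argument, and it is ultimately assigned to mObject. In this communication, mObject is the ActivityManagerNative object.

At this point control moves from C++ back into Java and enters AMN.execTransact. Because AMN extends Binder, this is Binder.execTransact.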

[-> Binder.java]

private boolean execTransact(int code, long dataObj, long replyObj,

int flags) {

Parcel data = Parcel.obtain(dataObj);

Parcel reply = Parcel.obtain(replyObj);

boolean res;

try {

// Call the subclass method AMN.onTransact [see step 4.6]

res = onTransact(code, data, reply, flags);

} catch (RemoteException e) {

if ((flags & FLAG_ONEWAY) != 0) {

...

} else {

// For non-oneway calls, the exception is written back into reply

reply.setDataPosition(0);

reply.writeException(e);

}

res = true;

} catch (RuntimeException e) {

if ((flags & FLAG_ONEWAY) != 0) {

...

} else {

reply.setDataPosition(0);

reply.writeException(e);

}

res = true;

} catch (OutOfMemoryError e) {

RuntimeException re = new RuntimeException("Out of memory", e);

reply.setDataPosition(0);

reply.writeException(re);

res = true;

}

reply.recycle();

data.recycle();

return res;

}

When a RemoteException, RuntimeException, or OutOfMemoryError occurs, the exception is passed back to the caller in the non-oneway case.

[-> ActivityManagerNative.java]

public boolean onTransact(int code, Parcel data, Parcel reply, int flags)

throws RemoteException {

switch (code) {

...

case START_SERVICE_TRANSACTION: {

data.enforceInterface(IActivityManager.descriptor);

IBinder b = data.readStrongBinder();

// Create the proxy for ApplicationThreadNative, i.e. an ApplicationThreadProxy object

IApplicationThread app = ApplicationThreadNative.asInterface(b);

Intent service = Intent.CREATOR.createFromParcel(data);

String resolvedType = data.readString();

String callingPackage = data.readString();

int userId = data.readInt();

// Call ActivityManagerService.startService() [see step 4.7]

ComponentName cn = startService(app, service, resolvedType, callingPackage, userId);

reply.writeNoException();

ComponentName.writeToParcel(cn, reply);

return true;

}

}

public ComponentName startService(IApplicationThread caller, Intent service,

String resolvedType, String callingPackage, int userId)

throws TransactionTooLargeException {

synchronized(this) {

...

ComponentName res = mServices.startServiceLocked(caller, service,

resolvedType, callingPid, callingUid, callingPackage, userId);

Binder.restoreCallingIdentity(origId);

return res;

}

}

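After a long journey we have finally arrived at AMS.startService. Once system_server has handled BR_TRANSACTION, a similar process runs in the other direction to tell the app's process that the service start has completed. That process is essentially the same and is not expanded here.

This article has described in detail how AMP.startService is carried by Binder, step by step, into AMS.startService in the system_server process. The process spans the Java framework, native code, and the kernel driver. That a single Binder IPC call takes this much space to explain shows how large the system is. The call flow of the whole process: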

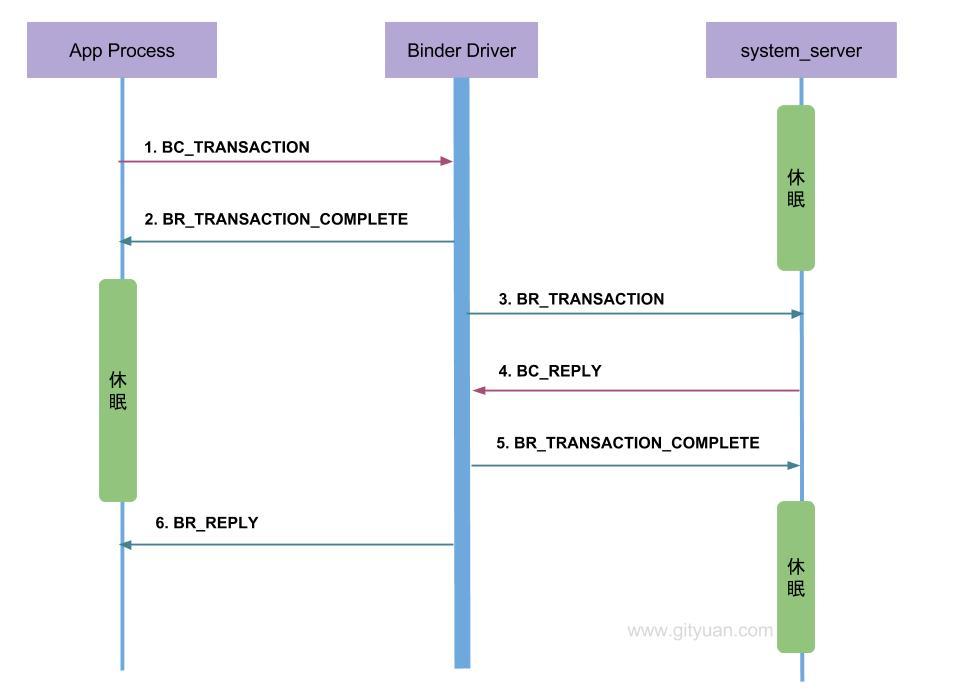

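Viewed as a communication flow:

Parts two through four above mainly covered BC_TRANSACTION -> BR_TRANSACTION_COMPLETE -> BR_TRANSACTION.

Interested readers can look at how the following three transactions are handled: BC_REPLY -> BR_TRANSACTION_COMPLETE -> BR_REPLY. The two flows are essentially the same.

Viewed as a communication protocol: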

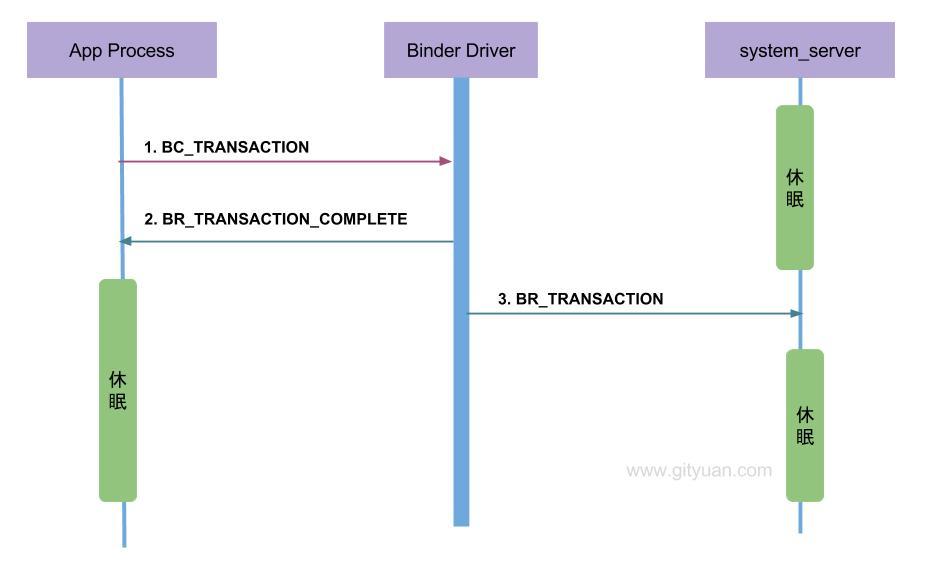

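Commands sent by a Binder client or server to the Binder driver start with BC_, such as BC_TRANSACTION and BC_REPLY in this article; commands sent by the Binder driver to a client or server start with BR_, such as BR_TRANSACTION and BR_REPLY.

binder_transaction() is called to process a transaction only for BC_TRANSACTION or BC_REPLY. In both cases the caller is answered with a BINDER_WORK_TRANSACTION_COMPLETE work item, which binder_thread_read() converts into BR_TRANSACTION_COMPLETE.

The figure above is the protocol diagram for non-oneway communication; the figure below is the protocol diagram for the oneway case: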

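With oneway, the call returns once BR_TRANSACTION_COMPLETE is received. You may wonder why a oneway call still waits for a response at all. An example makes it clear.

You (the app process) want to mail a letter (the transaction) to family far away (the system_server process), and you need a courier (the Binder driver) to do it. The process goes as follows:

1. You hand the letter to the courier (BC_TRANSACTION);
2. The courier gives you a receipt (BR_TRANSACTION_COMPLETE). Only then can you be sure the courier has accepted the letter. If you simply walked away, you would not know whether the letter ever reached the courier, and when no reply came for a long time you could not tell whether it was lost on the way or never handed over at all. So even a oneway call needs to know whether the hand-off succeeded;
3. The courier delivers the letter to your family (BR_TRANSACTION).

If you do not expect a reply from your family once you hold the receipt (BR_TRANSACTION_COMPLETE), this is a oneway communication.

If you do want a reply, that is the non-oneway case: after step 2 you do not return right away but keep waiting for your family's reply. After the first three steps, it continues:

4. Your family writes a reply and hands it to the courier (BC_REPLY);
5. The courier gives your family a receipt (BR_TRANSACTION_COMPLETE);
6. The courier delivers the reply to you (BR_REPLY).

This completes one non-oneway communication.

oneway versus non-oneway: both wait for the Binder driver's BR_TRANSACTION_COMPLETE. The main difference is that a oneway call returns on receiving BR_TRANSACTION_COMPLETE and does not go on to wait for BR_REPLY.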
