Editor: About Android Programming

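The previous article, "A Brief Introduction to Binder, Android's Inter-Process Communication (IPC) Mechanism, and a Study Plan", gave an overview of the overall architecture of Binder, which consists of four components: Client, Server, Service Manager, and the Binder driver. This article focuses on Service Manager. It is the daemon of the whole Binder mechanism: it manages the Servers that developers create and lets Clients look up a Server's remote interface.

Since Service Manager manages Servers and lets Clients look up Server remote interfaces, it has to communicate with both Servers and Clients. Service Manager, Client, and Server each run in their own process, so this communication is also inter-process communication, and it too goes through Binder. Service Manager therefore acts as the daemon of the Binder mechanism and as a Server at the same time. It is a special kind of Server, however, and we will see below what makes it special.

There is a lot of source code related to Service Manager, and we will not analyze every line. Instead we follow one thread, how Service Manager becomes the daemon of the whole Binder mechanism, and work step by step through the relevant code from user space down to kernel space. Before reading on, readers are encouraged to read the two references mentioned in the previous article, "Android Binder Mechanism Made Simple" and "Android Binder Design and Implementation", to become familiar with the concepts and data structures. This will make the code below easier to follow.

The user-space source code of Service Manager lives in the frameworks/base/cmds/servicemanager directory and consists mainly of three files: binder.h, binder.c, and service_manager.c. The entry point of Service Manager is the main function in service_manager.c: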

int main(int argc, char **argv)
{
    struct binder_state *bs;
    void *svcmgr = BINDER_SERVICE_MANAGER;

    bs = binder_open(128*1024);

    if (binder_become_context_manager(bs)) {
        LOGE("cannot become context manager (%s)\n", strerror(errno));
        return -1;
    }

    svcmgr_handle = svcmgr;
    binder_loop(bs, svcmgr_handler);
    return 0;
}

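The main function does three things. First, it opens the Binder device file. Second, it tells the Binder driver that it is the Binder context manager, that is, the daemon mentioned above. Third, it enters an infinite loop in which it acts as a Server and waits for Client requests. Before going into these three steps, let us look at the struct binder_state and the macro BINDER_SERVICE_MANAGER used here.

struct binder_state is defined in frameworks/base/cmds/servicemanager/binder.c: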

struct binder_state
{
    int fd;
    void *mapped;
    unsigned mapsize;
};

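fd is the file descriptor of the opened /dev/binder device file; mapped is the start address at which /dev/binder is mapped into the process address space; mapsize is the size of that mapping.

The macro BINDER_SERVICE_MANAGER is defined in frameworks/base/cmds/servicemanager/binder.h: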

/* the one magic object */
#define BINDER_SERVICE_MANAGER ((void*) 0)

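It means that the handle of Service Manager is 0. Binder uses handles to represent remote interfaces, and the concept is much the same as a handle in Windows programming. As mentioned earlier, Service Manager acts as a Server as well as the daemon. When it is used as a remote interface its handle value is 0, and this is what makes it special: the remote interface handles of all other Servers are values greater than 0 that the Binder driver assigns automatically.

main first opens the Binder device file by calling binder_open, which is located in frameworks/base/cmds/servicemanager/binder.c: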

struct binder_state *binder_open(unsigned mapsize)
{
    struct binder_state *bs;

    bs = malloc(sizeof(*bs));
    if (!bs) {
        errno = ENOMEM;
        return 0;
    }

    bs->fd = open("/dev/binder", O_RDWR);
    if (bs->fd < 0) {
        fprintf(stderr,"binder: cannot open device (%s)\n",
                strerror(errno));
        goto fail_open;
    }

    bs->mapsize = mapsize;
    bs->mapped = mmap(NULL, mapsize, PROT_READ, MAP_PRIVATE, bs->fd, 0);
    if (bs->mapped == MAP_FAILED) {
        fprintf(stderr,"binder: cannot map device (%s)\n",
                strerror(errno));
        goto fail_map;
    }

    /* TODO: check version */

    return bs;

fail_map:
    close(bs->fd);
fail_open:
    free(bs);
    return 0;
}
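The open, mmap, and goto-based cleanup pattern used by binder_open can be tried outside Android. The following is a minimal sketch under stated assumptions: it maps an ordinary file instead of /dev/binder, and the names map_state, map_open, and map_close are hypothetical stand-ins, not part of the Service Manager sources.

```c
#include <assert.h>
#include <fcntl.h>
#include <stdlib.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical stand-in for struct binder_state. */
struct map_state {
    int fd;          /* descriptor of the opened file */
    void *mapped;    /* start address of the mapping */
    size_t mapsize;  /* size of the mapping in bytes */
};

/* Open `path` and map `mapsize` bytes read-only. Returns NULL on failure. */
struct map_state *map_open(const char *path, size_t mapsize)
{
    struct map_state *ms = malloc(sizeof(*ms));
    if (!ms)
        return NULL;

    ms->fd = open(path, O_RDONLY);
    if (ms->fd < 0)
        goto fail_open;

    ms->mapsize = mapsize;
    ms->mapped = mmap(NULL, mapsize, PROT_READ, MAP_PRIVATE, ms->fd, 0);
    if (ms->mapped == MAP_FAILED)
        goto fail_map;

    return ms;

fail_map:               /* undo the steps in reverse order */
    close(ms->fd);
fail_open:
    free(ms);
    return NULL;
}

/* Release everything map_open acquired. */
void map_close(struct map_state *ms)
{
    assert(ms != NULL);
    munmap(ms->mapped, ms->mapsize);
    close(ms->fd);
    free(ms);
}
```

Each failure label releases only what was acquired before the failing step, which is why the labels appear in the reverse order of the acquisitions.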

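binder_open opens the /dev/binder device file with the open file operation. This device file is created when the Binder driver module is initialized, so let us first look at how it is created. In the kernel/common/drivers/staging/android directory, open binder.c and find the module initialization entry point binder_init: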

static struct file_operations binder_fops = {

.owner = THIS_MODULE,

.poll = binder_poll,

.unlocked_ioctl = binder_ioctl,

.mmap = binder_mmap,

.open = binder_open,

.flush = binder_flush,

.release = binder_release,

};

static struct miscdevice binder_miscdev = {

.minor = MISC_DYNAMIC_MINOR,

.name = "binder",

.fops = &binder_fops

};

static int __init binder_init(void)

{

int ret;

binder_proc_dir_entry_root = proc_mkdir("binder", NULL);

if (binder_proc_dir_entry_root)

binder_proc_dir_entry_proc = proc_mkdir("proc", binder_proc_dir_entry_root);

ret = misc_register(&binder_miscdev);

if (binder_proc_dir_entry_root) {

create_proc_read_entry("state", S_IRUGO, binder_proc_dir_entry_root, binder_read_proc_state, NULL);

create_proc_read_entry("stats", S_IRUGO, binder_proc_dir_entry_root, binder_read_proc_stats, NULL);

create_proc_read_entry("transactions", S_IRUGO, binder_proc_dir_entry_root, binder_read_proc_transactions, NULL);

create_proc_read_entry("transaction_log", S_IRUGO, binder_proc_dir_entry_root, binder_read_proc_transaction_log, &binder_transaction_log);

create_proc_read_entry("failed_transaction_log", S_IRUGO, binder_proc_dir_entry_root, binder_read_proc_transaction_log, &binder_transaction_log_failed);

}

return ret;

}

device_initcall(binder_init);

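The device file is created inside misc_register. Registration of misc devices was covered in the article "Source Code Analysis of Logger, the Android Logging System Driver", and interested readers may want to take a look. The rest of the logic mostly creates Binder-related files under /proc for users to read. From the file operations table binder_fops we can see that when the user-space binder_open function executes the statement: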

bs->fd = open("/dev/binder", O_RDWR);

control enters the binder_open function of the Binder driver:

static int binder_open(struct inode *nodp, struct file *filp)

{

struct binder_proc *proc;

if (binder_debug_mask & BINDER_DEBUG_OPEN_CLOSE)

printk(KERN_INFO "binder_open: %d:%d\n", current->group_leader->pid, current->pid);

proc = kzalloc(sizeof(*proc), GFP_KERNEL);

if (proc == NULL)

return -ENOMEM;

get_task_struct(current);

proc->tsk = current;

INIT_LIST_HEAD(&proc->todo);

init_waitqueue_head(&proc->wait);

proc->default_priority = task_nice(current);

mutex_lock(&binder_lock);

binder_stats.obj_created[BINDER_STAT_PROC]++;

hlist_add_head(&proc->proc_node, &binder_procs);

proc->pid = current->group_leader->pid;

INIT_LIST_HEAD(&proc->delivered_death);

filp->private_data = proc;

mutex_unlock(&binder_lock);

if (binder_proc_dir_entry_proc) {

char strbuf[11];

snprintf(strbuf, sizeof(strbuf), "%u", proc->pid);

remove_proc_entry(strbuf, binder_proc_dir_entry_proc);

create_proc_read_entry(strbuf, S_IRUGO, binder_proc_dir_entry_proc, binder_read_proc_proc, proc);

}

return 0;

}

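The main job of this function is to create a struct binder_proc that holds the context of the process opening /dev/binder, and to store it in the private_data member of the open file structure struct file. Later file operations can then retrieve the process context from struct file. The same context is also linked into a global list, binder_procs, for internal use by the driver (it is an hlist, the list type the kernel normally uses for hash buckets). binder_procs is defined near the top of the file: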

static HLIST_HEAD(binder_procs);
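The private_data idiom described above can be sketched in plain C. This is only an illustration under stated assumptions: demo_file, demo_proc, demo_open, and demo_ioctl are hypothetical user-space stand-ins for struct file, struct binder_proc, and the driver's open and ioctl handlers, and a singly linked list stands in for the kernel hlist.

```c
#include <assert.h>
#include <stdlib.h>

struct demo_file {            /* stand-in for struct file */
    void *private_data;
};

struct demo_proc {            /* stand-in for struct binder_proc */
    int pid;
    struct demo_proc *next;   /* link in the global list */
};

static struct demo_proc *demo_procs;  /* stand-in for binder_procs */

/* "open": allocate the per-process context and remember it in the file. */
int demo_open(struct demo_file *filp, int pid)
{
    struct demo_proc *proc = calloc(1, sizeof(*proc));
    if (!proc)
        return -1;
    proc->pid = pid;
    proc->next = demo_procs;      /* add at the head of the global list */
    demo_procs = proc;
    filp->private_data = proc;    /* later operations find it here */
    return 0;
}

/* A later operation: recover the context from the file and use it. */
int demo_ioctl(struct demo_file *filp)
{
    struct demo_proc *proc = filp->private_data;
    assert(proc != NULL);
    return proc->pid;
}
```

The point of the idiom is that no lookup by pid is needed on later calls: the file object already carries a pointer to the right context.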

struct binder_proc is also defined in kernel/common/drivers/staging/android/binder.c:

struct binder_proc {

struct hlist_node proc_node;

struct rb_root threads;

struct rb_root nodes;

struct rb_root refs_by_desc;

struct rb_root refs_by_node;

int pid;

struct vm_area_struct *vma;

struct task_struct *tsk;

struct files_struct *files;

struct hlist_node deferred_work_node;

int deferred_work;

void *buffer;

ptrdiff_t user_buffer_offset;

struct list_head buffers;

struct rb_root free_buffers;

struct rb_root allocated_buffers;

size_t free_async_space;

struct page **pages;

size_t buffer_size;

uint32_t buffer_free;

struct list_head todo;

wait_queue_head_t wait;

struct binder_stats stats;

struct list_head delivered_death;

int max_threads;

int requested_threads;

int requested_threads_started;

int ready_threads;

long default_priority;

};

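This struct has many members and we will not explain them all here. We briefly describe four of them, threads, nodes, refs_by_desc, and refs_by_node, and leave the rest until we meet them. Each of these four members is of type struct rb_root, the root of a red-black tree, so every binder_proc owns four red-black trees. The threads tree holds the threads in the process that handle user requests; their maximum number is determined by max_threads. The nodes tree holds the Binder entities that belong to the process. The refs_by_desc and refs_by_node trees hold the Binder references of the process, that is, references to Binder entities in other processes. The two trees organize the same set of references in two ways, one keyed by handle and one keyed by the address of the referenced entity node; two trees are used only to make both kinds of lookup convenient inside the driver.

This completes the opening of the /dev/binder device file. Next the opened device file is memory-mapped with mmap:

bs->mapped = mmap(NULL, mapsize, PROT_READ, MAP_PRIVATE, bs->fd, 0);

This corresponds to the binder_mmap function of the Binder driver: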

static int binder_mmap(struct file *filp, struct vm_area_struct *vma)

{

int ret;

struct vm_struct *area;

struct binder_proc *proc = filp->private_data;

const char *failure_string;

struct binder_buffer *buffer;

if ((vma->vm_end - vma->vm_start) > SZ_4M)

vma->vm_end = vma->vm_start + SZ_4M;

if (binder_debug_mask & BINDER_DEBUG_OPEN_CLOSE)

printk(KERN_INFO

"binder_mmap: %d %lx-%lx (%ld K) vma %lx pagep %lx\n",

proc->pid, vma->vm_start, vma->vm_end,

(vma->vm_end - vma->vm_start) / SZ_1K, vma->vm_flags,

(unsigned long)pgprot_val(vma->vm_page_prot));

if (vma->vm_flags & FORBIDDEN_MMAP_FLAGS) {

ret = -EPERM;

failure_string = "bad vm_flags";

goto err_bad_arg;

}

vma->vm_flags = (vma->vm_flags | VM_DONTCOPY) & ~VM_MAYWRITE;

if (proc->buffer) {

ret = -EBUSY;

failure_string = "already mapped";

goto err_already_mapped;

}

area = get_vm_area(vma->vm_end - vma->vm_start, VM_IOREMAP);

if (area == NULL) {

ret = -ENOMEM;

failure_string = "get_vm_area";

goto err_get_vm_area_failed;

}

proc->buffer = area->addr;

proc->user_buffer_offset = vma->vm_start - (uintptr_t)proc->buffer;

#ifdef CONFIG_CPU_CACHE_VIPT

if (cache_is_vipt_aliasing()) {

while (CACHE_COLOUR((vma->vm_start ^ (uint32_t)proc->buffer))) {

printk(KERN_INFO "binder_mmap: %d %lx-%lx maps %p bad alignment\n", proc->pid, vma->vm_start, vma->vm_end, proc->buffer);

vma->vm_start += PAGE_SIZE;

}

}

#endif

proc->pages = kzalloc(sizeof(proc->pages[0]) * ((vma->vm_end - vma->vm_start) / PAGE_SIZE), GFP_KERNEL);

if (proc->pages == NULL) {

ret = -ENOMEM;

failure_string = "alloc page array";

goto err_alloc_pages_failed;

}

proc->buffer_size = vma->vm_end - vma->vm_start;

vma->vm_ops = &binder_vm_ops;

vma->vm_private_data = proc;

if (binder_update_page_range(proc, 1, proc->buffer, proc->buffer + PAGE_SIZE, vma)) {

ret = -ENOMEM;

failure_string = "alloc small buf";

goto err_alloc_small_buf_failed;

}

buffer = proc->buffer;

INIT_LIST_HEAD(&proc->buffers);

list_add(&buffer->entry, &proc->buffers);

buffer->free = 1;

binder_insert_free_buffer(proc, buffer);

proc->free_async_space = proc->buffer_size / 2;

barrier();

proc->files = get_files_struct(current);

proc->vma = vma;

/*printk(KERN_INFO "binder_mmap: %d %lx-%lx maps %p\n", proc->pid, vma->vm_start, vma->vm_end, proc->buffer);*/

return 0;

err_alloc_small_buf_failed:

kfree(proc->pages);

proc->pages = NULL;

err_alloc_pages_failed:

vfree(proc->buffer);

proc->buffer = NULL;

err_get_vm_area_failed:

err_already_mapped:

err_bad_arg:

printk(KERN_ERR "binder_mmap: %d %lx-%lx %s failed %d\n", proc->pid, vma->vm_start, vma->vm_end, failure_string, ret);

return ret;

}

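The function first obtains, through filp->private_data, the struct binder_proc created when /dev/binder was opened. The mapping information is in the vma parameter. Note that vma has type struct vm_area_struct, which describes a contiguous range of virtual addresses. Among the local variable declarations there is a similar structure, struct vm_struct, which also describes a contiguous range of virtual addresses. What is the difference? In Linux, the virtual addresses described by struct vm_area_struct are used by the process, while those described by struct vm_struct are used by the kernel. In both cases the underlying physical pages need not be contiguous. With the conventional 3G/1G split on 32-bit x86, struct vm_area_struct covers the range 0 ~ 3G, while struct vm_struct covers (3G + 896M + 8M) ~ 4G. Why not 3G ~ 4G? Because the range 3G ~ (3G + 896M) is used to map contiguous physical pages: its virtual addresses correspond in a simple linear way to the physical addresses 0 ~ 896M. The range (3G + 896M) ~ (3G + 896M + 8M) is a guard region (any pointer into these 8M is invalid). struct vm_struct therefore uses (3G + 896M + 8M) ~ 4G to map non-contiguous physical pages. For more on Linux memory management, see Chapter 8 of "Understanding the Linux Kernel", mentioned in the article "Getting Started with Android Learning".

Why map the same physical page into both the process virtual address space and the kernel virtual address space? This is the essence of Binder inter-process communication. With the same physical page mapped on one side into the process address space and on the other into the kernel address space, one memory copy between the process and the kernel is saved, which improves IPC efficiency. For example, suppose a Client wants to pass a block of data to a Server. The usual approach is for the Client to copy the data from its process space into kernel space, after which the kernel copies it from kernel space into the Server's process space so that the Server can access it. That takes two memory copies. With the approach described above, the data is copied once from the Client's process space into kernel space, and the Server then shares it with the kernel. The whole transfer needs only one memory copy.

With the principle behind binder_mmap explained, the logic of the function is easier to follow. First, though, a few members of struct binder_proc need explaining. buffer is a void* pointer giving the start address, in kernel space, of the memory to be mapped. buffer_size is a size_t giving the size of the mapped memory. pages is an array of struct page* pointers, where struct page is the structure that describes a physical page. user_buffer_offset is a ptrdiff_t giving the difference between the virtual address used by the kernel and the one used by the process: if a physical page has the virtual address addr in kernel space, its virtual address in process space is addr + user_buffer_offset.

We also need to explain how the Binder driver manages the mapped address range buffer ~ (buffer + buffer_size). The range is divided into segments, each described by a struct binder_buffer: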
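The difference between the two approaches can be made concrete by counting copies. The sketch below is a user-space simulation only: plain arrays stand in for the kernel buffer and for the shared physical page, and all names (counted_copy, send_two_copy, send_one_copy) are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define MSG_MAX 64

static int copies;   /* number of memcpy calls in the last send */

static void counted_copy(void *dst, const void *src, size_t n)
{
    memcpy(dst, src, n);
    copies++;
}

/* Conventional IPC: client -> kernel buffer -> server buffer. */
int send_two_copy(const char *msg, size_t n, char *server_buf)
{
    char kernel_buf[MSG_MAX];
    assert(n <= MSG_MAX);
    copies = 0;
    counted_copy(kernel_buf, msg, n);        /* copy 1: into the kernel */
    counted_copy(server_buf, kernel_buf, n); /* copy 2: out to the server */
    return copies;
}

/*
 * Binder-style: `shared_page` stands for one physical page that is mapped
 * into both the kernel and the server, so the kernel's copy is already
 * visible to the server without a second copy.
 */
int send_one_copy(const char *msg, size_t n, char *shared_page,
                  const char **server_view)
{
    assert(n <= MSG_MAX);
    copies = 0;
    counted_copy(shared_page, msg, n);       /* the only copy */
    *server_view = shared_page;              /* server reads the same page */
    return copies;
}
```

In the real driver the "same page" effect comes from the page tables rather than from sharing a C pointer, but the copy count is the same.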
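The role of user_buffer_offset is simple address arithmetic, which a small worked example can show. The sketch below assumes made-up addresses and hypothetical names (demo_map, demo_map_init, kernel_to_user); only the relation user address = kernel address + user_buffer_offset comes from the text above.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

struct demo_map {
    uintptr_t buffer;              /* kernel-side start of the mapping */
    ptrdiff_t user_buffer_offset;  /* user start minus kernel start */
};

/* Record both start addresses the way binder_mmap records them. */
void demo_map_init(struct demo_map *m, uintptr_t kernel_start,
                   uintptr_t user_start)
{
    m->buffer = kernel_start;
    /* Usually negative, since kernel addresses sit above user addresses. */
    m->user_buffer_offset = (ptrdiff_t)(user_start - kernel_start);
}

/* Translate a kernel virtual address inside the mapping to the user one. */
uintptr_t kernel_to_user(const struct demo_map *m, uintptr_t kaddr)
{
    assert(kaddr >= m->buffer);
    return kaddr + (uintptr_t)m->user_buffer_offset;
}
```

For instance, with a kernel start of 0xf8000000 and a user start of 0x40000000 (both invented for illustration), the byte at kernel address 0xf8001000 is visible to the process at 0x40001000.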

struct binder_buffer {

struct list_head entry; /* free and allocated entries by addesss */

struct rb_node rb_node; /* free entry by size or allocated entry */

/* by address */

unsigned free : 1;

unsigned allow_user_free : 1;

unsigned async_transaction : 1;

unsigned debug_id : 29;

struct binder_transaction *transaction;

struct binder_node *target_node;

size_t data_size;

size_t offsets_size;

uint8_t data[0];

};

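Each binder_buffer is linked through its entry member, in order of increasing address, into the list headed by buffers in struct binder_proc. Each binder_buffer is also either in use or free, as indicated by its free member. A free binder_buffer is linked through rb_node into the red-black tree free_buffers in struct binder_proc, and one in use is linked through rb_node into the red-black tree allocated_buffers. All of this makes the address range easier to search and maintain, as we will see in other parts of the code when we reach them.

We can finally return to binder_mmap. It starts with some sanity checks on its arguments. For example, the mapping is limited to SZ_4M, that is, 4M; a larger request is truncated to that size. In the main function of service_manager.c the value passed in is 128 * 1024 bytes, or 128K, so this check causes no trouble. After the sanity checks, get_vm_area is called to obtain a free vm_struct range, and the buffer, user_buffer_offset, pages, and buffer_size members of proc are initialized. Next, binder_update_page_range is called to allocate a free physical page for the virtual address range proc->buffer ~ proc->buffer + PAGE_SIZE. This range is described by one binder_buffer, which is inserted into the proc->buffers list and the proc->free_buffers red-black tree. Finally, the free_async_space, files, and vma members of proc are initialized.

Let us now step into binder_update_page_range to see how the Binder driver maps one physical page into both kernel space and process space: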
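The bookkeeping described above, segments kept in address order with a free flag, can be sketched as follows. This is a simplification under stated assumptions: a linear scan over the list replaces the driver's red-black trees, the caller supplies storage for the split-off segment, and the names seg, seg_best_fit, and seg_alloc are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

struct seg {
    size_t start;      /* offset of the segment inside the mapping */
    size_t size;       /* length in bytes */
    int free;          /* 1 if unused, like binder_buffer.free */
    struct seg *next;  /* next segment in increasing address order */
};

/* Find the smallest free segment of at least `want` bytes, or NULL. */
struct seg *seg_best_fit(struct seg *head, size_t want)
{
    struct seg *best = NULL;
    for (struct seg *s = head; s; s = s->next)
        if (s->free && s->size >= want && (!best || s->size < best->size))
            best = s;
    return best;
}

/*
 * Allocate `want` bytes. If the chosen segment is larger, the remainder is
 * split off into `spare`, which stays free and follows it in address order.
 */
struct seg *seg_alloc(struct seg *head, size_t want, struct seg *spare)
{
    assert(spare != NULL);
    struct seg *s = seg_best_fit(head, want);
    if (!s)
        return NULL;
    if (s->size > want) {
        spare->start = s->start + want;
        spare->size = s->size - want;
        spare->free = 1;
        spare->next = s->next;
        s->next = spare;
        s->size = want;
    }
    s->free = 0;
    return s;
}
```

Keeping free segments searchable by size and allocated ones by address, as the comment on rb_node in struct binder_buffer indicates, is what the two trees in the driver provide; the scan here gives the same answers at a higher cost.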
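The size arithmetic in binder_mmap can be checked with a few lines of C. The sketch assumes a 4096-byte page, which is common but not guaranteed, and uses hypothetical names (DEMO_PAGE_SIZE, DEMO_SZ_4M, clamp_map_size, page_slots, async_space).

```c
#include <assert.h>
#include <stddef.h>

#define DEMO_PAGE_SIZE 4096u                   /* assumed page size */
#define DEMO_SZ_4M     (4u * 1024u * 1024u)    /* same value as SZ_4M */

/* Mirror of the size cap at the top of binder_mmap. */
size_t clamp_map_size(size_t requested)
{
    return requested > DEMO_SZ_4M ? DEMO_SZ_4M : requested;
}

/* Number of entries needed in the proc->pages array. */
size_t page_slots(size_t map_size)
{
    assert(map_size % DEMO_PAGE_SIZE == 0);
    return map_size / DEMO_PAGE_SIZE;
}

/* Half of the buffer is reserved for asynchronous transactions. */
size_t async_space(size_t map_size)
{
    return map_size / 2;
}
```

For the 128K mapping requested by Service Manager this gives 32 page slots and 64K of asynchronous space, while an 8M request would be cut down to 4M and 1024 slots.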

static int binder_update_page_range(struct binder_proc *proc, int allocate,

void *start, void *end, struct vm_area_struct *vma)

{

void *page_addr;

unsigned long user_page_addr;

struct vm_struct tmp_area;

struct page **page;

struct mm_struct *mm;

if (binder_debug_mask & BINDER_DEBUG_BUFFER_ALLOC)

printk(KERN_INFO "binder: %d: %s pages %p-%p\n",

proc->pid, allocate ? "allocate" : "free", start, end);

if (end <= start)

return 0;

if (vma)

mm = NULL;

else

mm = get_task_mm(proc->tsk);

if (mm) {

down_write(&mm->mmap_sem);

vma = proc->vma;

}

if (allocate == 0)

goto free_range;

if (vma == NULL) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed to "

"map pages in userspace, no vma\n", proc->pid);

goto err_no_vma;

}

for (page_addr = start; page_addr < end; page_addr += PAGE_SIZE) {

int ret;

struct page **page_array_ptr;

page = &proc->pages[(page_addr - proc->buffer) / PAGE_SIZE];

BUG_ON(*page);

*page = alloc_page(GFP_KERNEL | __GFP_ZERO);

if (*page == NULL) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed "

"for page at %p\n", proc->pid, page_addr);

goto err_alloc_page_failed;

}

tmp_area.addr = page_addr;

tmp_area.size = PAGE_SIZE + PAGE_SIZE /* guard page? */;

page_array_ptr = page;

ret = map_vm_area(&tmp_area, PAGE_KERNEL, &page_array_ptr);

if (ret) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed "

"to map page at %p in kernel\n",

proc->pid, page_addr);

goto err_map_kernel_failed;

}

user_page_addr =

(uintptr_t)page_addr + proc->user_buffer_offset;

ret = vm_insert_page(vma, user_page_addr, page[0]);

if (ret) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed "

"to map page at %lx in userspace\n",

proc->pid, user_page_addr);

goto err_vm_insert_page_failed;

}

/* vm_insert_page does not seem to increment the refcount */

}

if (mm) {

up_write(&mm->mmap_sem);

mmput(mm);

}

return 0;

free_range:

for (page_addr = end - PAGE_SIZE; page_addr >= start;

page_addr -= PAGE_SIZE) {

page = &proc->pages[(page_addr - proc->buffer) / PAGE_SIZE];

if (vma)

zap_page_range(vma, (uintptr_t)page_addr +

proc->user_buffer_offset, PAGE_SIZE, NULL);

err_vm_insert_page_failed:

unmap_kernel_range((unsigned long)page_addr, PAGE_SIZE);

err_map_kernel_failed:

__free_page(*page);

*page = NULL;

err_alloc_page_failed:

;

}

err_no_vma:

if (mm) {

up_write(&mm->mmap_sem);

mmput(mm);

}

return -ENOMEM;

}

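This function can both allocate and free physical pages, depending on the allocate parameter. Here we only look at allocation. The virtual address range for which pages are to be allocated is start ~ end. We skip the checks at the beginning of the function and go straight to the for loop in the middle: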

for (page_addr = start; page_addr < end; page_addr += PAGE_SIZE) {

int ret;

struct page **page_array_ptr;

page = &proc->pages[(page_addr - proc->buffer) / PAGE_SIZE];

BUG_ON(*page);

*page = alloc_page(GFP_KERNEL | __GFP_ZERO);

if (*page == NULL) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed "

"for page at %p\n", proc->pid, page_addr);

goto err_alloc_page_failed;

}

tmp_area.addr = page_addr;

tmp_area.size = PAGE_SIZE + PAGE_SIZE /* guard page? */;

page_array_ptr = page;

ret = map_vm_area(&tmp_area, PAGE_KERNEL, &page_array_ptr);

if (ret) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed "

"to map page at %p in kernel\n",

proc->pid, page_addr);

goto err_map_kernel_failed;

}

user_page_addr =

(uintptr_t)page_addr + proc->user_buffer_offset;

ret = vm_insert_page(vma, user_page_addr, page[0]);

if (ret) {

printk(KERN_ERR "binder: %d: binder_alloc_buf failed "

"to map page at %lx in userspace\n",

proc->pid, user_page_addr);

goto err_vm_insert_page_failed;

}

/* vm_insert_page does not seem to increment the refcount */

}

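The loop first calls alloc_page to allocate a physical page. alloc_page returns a struct page descriptor for the page, and the struct vm_struct tmp_area is filled in to describe the kernel address range for it. map_vm_area then maps the physical page into the kernel address range described by tmp_area. Next, page_addr + proc->user_buffer_offset gives the corresponding process virtual address, and vm_insert_page maps the same physical page into the process address space; the vma argument identifies the process address range to insert into.

That completes binder_open in frameworks/base/cmds/servicemanager/binder.c. Back in the main function in frameworks/base/cmds/servicemanager/service_manager.c, the next step is to call binder_become_context_manager to tell the Binder driver that this process is the context manager of the Binder mechanism, that is, the daemon. binder_become_context_manager is located in frameworks/base/cmds/servicemanager/binder.c: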

int binder_become_context_manager(struct binder_state *bs)
{
    return ioctl(bs->fd, BINDER_SET_CONTEXT_MGR, 0);
}

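Here the ioctl file operation is used to tell the Binder driver that this process is the daemon. The command number is BINDER_SET_CONTEXT_MGR and the argument is simply 0, since the command needs no data. BINDER_SET_CONTEXT_MGR is defined as: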

#define BINDER_SET_CONTEXT_MGR _IOW('b', 7, int)

This takes us into the binder_ioctl function of the Binder driver, where we only look at the BINDER_SET_CONTEXT_MGR command:

static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)

{

int ret;

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread;

unsigned int size = _IOC_SIZE(cmd);

void __user *ubuf = (void __user *)arg;

/*printk(KERN_INFO "binder_ioctl: %d:%d %x %lx\n", proc->pid, current->pid, cmd, arg);*/

ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

if (ret)

return ret;

mutex_lock(&binder_lock);

thread = binder_get_thread(proc);

if (thread == NULL) {

ret = -ENOMEM;

goto err;

}

switch (cmd) {

......

case BINDER_SET_CONTEXT_MGR:

if (binder_context_mgr_node != NULL) {

printk(KERN_ERR "binder: BINDER_SET_CONTEXT_MGR already set\n");

ret = -EBUSY;

goto err;

}

if (binder_context_mgr_uid != -1) {

if (binder_context_mgr_uid != current->cred->euid) {

printk(KERN_ERR "binder: BINDER_SET_"

"CONTEXT_MGR bad uid %d != %d\n",

current->cred->euid,

binder_context_mgr_uid);

ret = -EPERM;

goto err;

}

} else

binder_context_mgr_uid = current->cred->euid;

binder_context_mgr_node = binder_new_node(proc, NULL, NULL);

if (binder_context_mgr_node == NULL) {

ret = -ENOMEM;

goto err;

}

binder_context_mgr_node->local_weak_refs++;

binder_context_mgr_node->local_strong_refs++;

binder_context_mgr_node->has_strong_ref = 1;

binder_context_mgr_node->has_weak_ref = 1;

break;

......

default:

ret = -EINVAL;

goto err;

}

ret = 0;

err:

if (thread)

thread->looper &= ~BINDER_LOOPER_STATE_NEED_RETURN;

mutex_unlock(&binder_lock);

wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

if (ret && ret != -ERESTARTSYS)

printk(KERN_INFO "binder: %d:%d ioctl %x %lx returned %d\n", proc->pid, current->pid, cmd, arg, ret);

return ret;

}

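Before continuing with this function, two more data structures need explaining. The first is struct binder_thread. As the name suggests, it represents a thread, here the thread that calls binder_become_context_manager.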

struct binder_thread {

struct binder_proc *proc;

struct rb_node rb_node;

int pid;

int looper;

struct binder_transaction *transaction_stack;

struct list_head todo;

uint32_t return_error; /* Write failed, return error code in read buf */

uint32_t return_error2; /* Write failed, return error code in read */

/* buffer. Used when sending a reply to a dead process that */

/* we are also waiting on */

wait_queue_head_t wait;

struct binder_stats stats;

};

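proc is the process the thread belongs to. struct binder_proc has a member threads of type rb_root, a red-black tree that organizes all threads belonging to the process; the rb_node member of struct binder_thread is the node that links the thread into this tree. The looper member holds the state of the thread as a combination of the following flags: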

enum {
    BINDER_LOOPER_STATE_REGISTERED  = 0x01,
    BINDER_LOOPER_STATE_ENTERED     = 0x02,
    BINDER_LOOPER_STATE_EXITED      = 0x04,
    BINDER_LOOPER_STATE_INVALID     = 0x08,
    BINDER_LOOPER_STATE_WAITING     = 0x10,
    BINDER_LOOPER_STATE_NEED_RETURN = 0x20
};

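As for the remaining members: transaction_stack is the transaction the thread is currently handling, todo is the list of work items sent to the thread, return_error and return_error2 hold operation result codes, wait is used to block the thread until some event occurs, and stats holds statistics. We will look at what these members do when we meet them.

The other data structure is struct binder_node, which represents a Binder entity: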

struct binder_node {

int debug_id;

struct binder_work work;

union {

struct rb_node rb_node;

struct hlist_node dead_node;

};

struct binder_proc *proc;

struct hlist_head refs;

int internal_strong_refs;

int local_weak_refs;

int local_strong_refs;

void __user *ptr;

void __user *cookie;

unsigned has_strong_ref : 1;

unsigned pending_strong_ref : 1;

unsigned has_weak_ref : 1;

unsigned pending_weak_ref : 1;

unsigned has_async_transaction : 1;

unsigned accept_fds : 1;

int min_priority : 8;

struct list_head async_todo;

};

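rb_node and dead_node form a union. While the Binder entity is in normal use, rb_node links it into the red-black tree proc->nodes, which organizes all Binder entities belonging to the process. If the process that owns the entity has been destroyed while other processes still reference the entity, the entity is instead kept, through dead_node, on a separate hlist of dead nodes. proc is the process the Binder entity belongs to. refs links together, as a list, all Binder references that refer to this entity. internal_strong_refs, local_weak_refs, and local_strong_refs are reference counts for the entity. ptr and cookie are the entity's address in user space and its associated extra data. We do not describe the remaining members here and will analyze them as they come up.

Now back to binder_ioctl. It first obtains proc from filp->private_data, just as binder_mmap does. It then calls binder_get_thread to get the thread information. Let us look at that function: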

static struct binder_thread *binder_get_thread(struct binder_proc *proc)

{

struct binder_thread *thread = NULL;

struct rb_node *parent = NULL;

struct rb_node **p = &proc->threads.rb_node;

while (*p) {

parent = *p;

thread = rb_entry(parent, struct binder_thread, rb_node);

if (current->pid < thread->pid)

p = &(*p)->rb_left;

else if (current->pid > thread->pid)

p = &(*p)->rb_right;

else

break;

}

if (*p == NULL) {

thread = kzalloc(sizeof(*thread), GFP_KERNEL);

if (thread == NULL)

return NULL;

binder_stats.obj_created[BINDER_STAT_THREAD]++;

thread->proc = proc;

thread->pid = current->pid;

init_waitqueue_head(&thread->wait);

INIT_LIST_HEAD(&thread->todo);

rb_link_node(&thread->rb_node, parent, p);

rb_insert_color(&thread->rb_node, &proc->threads);

thread->looper |= BINDER_LOOPER_STATE_NEED_RETURN;

thread->return_error = BR_OK;

thread->return_error2 = BR_OK;

}

return thread;

}

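The pid of the current thread, current->pid, is used as the key to search the red-black tree proc->threads and see whether a binder_thread has already been created for this thread. In our scenario the current thread is here for the first time, so nothing is found and *p == NULL holds. A binder_thread context structure is therefore created for the current thread, its members are initialized, and it is inserted into the proc->threads tree so that it can be found through proc next time. Note that at this point thread->looper = BINDER_LOOPER_STATE_NEED_RETURN.

Back in binder_ioctl, further down there are two global variables, binder_context_mgr_node and binder_context_mgr_uid, defined as follows: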
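The find-or-create walk in binder_get_thread relies on a pointer-to-pointer cursor: when the search falls off the tree, the cursor already points at the link where the new node belongs. The sketch below shows the same idiom on an ordinary unbalanced binary search tree; it omits the red-black rebalancing, and the names demo_thread and demo_get_thread are hypothetical.

```c
#include <assert.h>
#include <stdlib.h>

#define DEMO_NEED_RETURN 0x20   /* same value as the NEED_RETURN flag */

struct demo_thread {
    int pid;                          /* search key */
    int looper;                       /* state flags */
    struct demo_thread *left, *right;
};

/* Return the entry for `pid`, creating and linking it if it is missing. */
struct demo_thread *demo_get_thread(struct demo_thread **root, int pid)
{
    assert(root != NULL);
    struct demo_thread **p = root;    /* cursor: address of the current link */

    while (*p) {
        if (pid < (*p)->pid)
            p = &(*p)->left;
        else if (pid > (*p)->pid)
            p = &(*p)->right;
        else
            return *p;                /* already known: reuse it */
    }

    /* Not found: *p is exactly the empty link where the node belongs. */
    *p = calloc(1, sizeof(**p));
    if (*p) {
        (*p)->pid = pid;
        (*p)->looper = DEMO_NEED_RETURN;
    }
    return *p;
}
```

A second call with the same pid returns the existing entry, which is why the later BINDER_WRITE_READ ioctl finds the thread created during BINDER_SET_CONTEXT_MGR.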

static struct binder_node *binder_context_mgr_node;
static uid_t binder_context_mgr_uid = -1;

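binder_context_mgr_node represents the Service Manager entity, and binder_context_mgr_uid is the uid of the Service Manager daemon process. In our scenario this code is being reached for the first time, so binder_context_mgr_node is NULL and binder_context_mgr_uid is -1. binder_context_mgr_uid is therefore set to current->cred->euid, which makes the current process the daemon of the Binder mechanism, and binder_new_node is called to create the Binder entity for Service Manager: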

static struct binder_node *

binder_new_node(struct binder_proc *proc, void __user *ptr, void __user *cookie)

{

struct rb_node **p = &proc->nodes.rb_node;

struct rb_node *parent = NULL;

struct binder_node *node;

while (*p) {

parent = *p;

node = rb_entry(parent, struct binder_node, rb_node);

if (ptr < node->ptr)

p = &(*p)->rb_left;

else if (ptr > node->ptr)

p = &(*p)->rb_right;

else

return NULL;

}

node = kzalloc(sizeof(*node), GFP_KERNEL);

if (node == NULL)

return NULL;

binder_stats.obj_created[BINDER_STAT_NODE]++;

rb_link_node(&node->rb_node, parent, p);

rb_insert_color(&node->rb_node, &proc->nodes);

node->debug_id = ++binder_last_id;

node->proc = proc;

node->ptr = ptr;

node->cookie = cookie;

node->work.type = BINDER_WORK_NODE;

INIT_LIST_HEAD(&node->work.entry);

INIT_LIST_HEAD(&node->async_todo);

if (binder_debug_mask & BINDER_DEBUG_INTERNAL_REFS)

printk(KERN_INFO "binder: %d:%d node %d u%p c%p created\n",

proc->pid, current->pid, node->debug_id,

node->ptr, node->cookie);

return node;

}

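Note that both ptr and cookie are passed in as NULL. The function first checks whether the proc->nodes red-black tree already contains a node keyed by ptr, and returns NULL if it does. In our scenario this is the first time through, so no such node exists. A new binder_node with ptr equal to NULL is created, its other members are initialized, and it is inserted into the proc->nodes tree.

When binder_new_node returns to binder_ioctl, the pointer to the new binder_node is stored in binder_context_mgr_node, and the reference counts of binder_context_mgr_node are then initialized.

That completes the BINDER_SET_CONTEXT_MGR command. Before binder_ioctl returns, it executes the following statement: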

if (thread)
    thread->looper &= ~BINDER_LOOPER_STATE_NEED_RETURN;

Recall that binder_get_thread left thread->looper = BINDER_LOOPER_STATE_NEED_RETURN. After this statement, thread->looper = 0.

Back in the main function in frameworks/base/cmds/servicemanager/service_manager.c, the next step is to call binder_loop to enter the loop and wait for Client requests. binder_loop is defined in frameworks/base/cmds/servicemanager/binder.c:

void binder_loop(struct binder_state *bs, binder_handler func)
{
    int res;
    struct binder_write_read bwr;
    unsigned readbuf[32];

    bwr.write_size = 0;
    bwr.write_consumed = 0;
    bwr.write_buffer = 0;

    readbuf[0] = BC_ENTER_LOOPER;
    binder_write(bs, readbuf, sizeof(unsigned));

    for (;;) {
        bwr.read_size = sizeof(readbuf);
        bwr.read_consumed = 0;
        bwr.read_buffer = (unsigned) readbuf;

        res = ioctl(bs->fd, BINDER_WRITE_READ, &bwr);

        if (res < 0) {
            LOGE("binder_loop: ioctl failed (%s)\n", strerror(errno));
            break;
        }

        res = binder_parse(bs, 0, readbuf, bwr.read_consumed, func);
        if (res == 0) {
            LOGE("binder_loop: unexpected reply?!\n");
            break;
        }
        if (res < 0) {
            LOGE("binder_loop: io error %d %s\n", res, strerror(errno));
            break;
        }
    }
}

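It first uses binder_write to issue the BC_ENTER_LOOPER command, which tells the Binder driver that Service Manager is about to enter its loop.

Here we need to introduce BINDER_WRITE_READ, an ioctl command code of the /dev/binder device file. First its definition:

#define BINDER_WRITE_READ _IOWR('b', 1, struct binder_write_read)

This ioctl command takes one argument, of type struct binder_write_read: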

struct binder_write_read {
    signed long write_size;     /* bytes to write */
    signed long write_consumed; /* bytes consumed by driver */
    unsigned long write_buffer;
    signed long read_size;      /* bytes to read */
    signed long read_consumed;  /* bytes consumed by driver */
    unsigned long read_buffer;
};

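Incidentally, most interaction between user-space programs and the Binder driver goes through the BINDER_WRITE_READ command. The data that write_buffer and read_buffer point to specifies the concrete operations to perform: each buffer holds a sequence of command words, each followed by whatever payload that command requires. For transaction commands such as BC_TRANSACTION and BR_TRANSACTION, the payload is a struct binder_transaction_data: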

struct binder_transaction_data {

/* The first two are only used for bcTRANSACTION and brTRANSACTION,

* identifying the target and contents of the transaction.

*/

union {

size_t handle; /* target descriptor of command transaction */

void *ptr; /* target descriptor of return transaction */

} target;

void *cookie; /* target object cookie */

unsigned int code; /* transaction command */

/* General information about the transaction. */

unsigned int flags;

pid_t sender_pid;

uid_t sender_euid;

size_t data_size; /* number of bytes of data */

size_t offsets_size; /* number of bytes of offsets */

/* If this transaction is inline, the data immediately

* follows here; otherwise, it ends with a pointer to

* the data buffer.

*/

union {

struct {

/* transaction data */

const void *buffer;

/* offsets from buffer to flat_binder_object structs */

const void *offsets;

} ptr;

uint8_t buf[8];

} data;

};

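The struct contains a union named target. When the target object is a local Binder entity, ptr gives the address of the object in its own process; otherwise handle gives a reference to the Binder entity. cookie is meaningful only when the target object is a Binder entity; it carries extra data that the entity itself interprets. code is the transaction code, that is, the operation requested of the target object, and there are many possible values that we do not list here. Note that the BC_ENTER_LOOPER command in our scenario is not carried in code: it is a driver command word placed directly in the write buffer, with no binder_transaction_data payload, and it tells the Binder driver that Service Manager is about to enter its loop. The driver command and return codes are defined by the two enums BinderDriverReturnProtocol and BinderDriverCommandProtocol in kernel/common/drivers/staging/android/binder.h.

The flags member holds the transaction flags: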

enum transaction_flags {
    TF_ONE_WAY     = 0x01, /* this is a one-way call: async, no return */
    TF_ROOT_OBJECT = 0x04, /* contents are the component's root object */
    TF_STATUS_CODE = 0x08, /* contents are a 32-bit status code */
    TF_ACCEPT_FDS  = 0x10, /* allow replies with file descriptors */
};

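The meaning of each flag is given in its comment; we will look at them in detail when they come up.

sender_pid and sender_euid are the pid and euid of the sending process.

data_size is the size of the data.ptr.buffer buffer and offsets_size is the size of the data.ptr.offsets buffer. The data member needs some explanation. The data actually being transferred is stored in the buffer that data.ptr.buffer points to; the members before it only describe that data. The contents of this buffer fall into two categories. One is ordinary data, which the Binder driver does not care about. The other is Binder entities and Binder references, which the Binder driver has to process. Why? If process A passes a Binder entity or reference to process B, the driver must maintain the reference count of that entity or reference, so that A cannot destroy the entity while B is still using it, which would crash B. So when the transferred data contains Binder entities or references, the driver must be told exactly where they are so that it can maintain them. This is the purpose of data.ptr.offsets: it lists the offsets, within the data.ptr.buffer buffer, of every Binder entity or reference. Each Binder entity or reference is represented by a struct flat_binder_object: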

/*

* This is the flattened representation of a Binder object for transfer

* between processes. The 'offsets' supplied as part of a binder transaction

* contains offsets into the data where these structures occur. The Binder

* driver takes care of re-writing the structure type and data as it moves

* between processes.

*/

struct flat_binder_object {

/* 8 bytes for large_flat_header. */

unsigned long type;

unsigned long flags;

/* 8 bytes of data. */

union {

void *binder; /* local object */

signed long handle; /* remote object */

};

/* extra data associated with local object */

void *cookie;

};

type is the type of the Binder object and takes one of the following values:

enum {

BINDER_TYPE_BINDER = B_PACK_CHARS('s', 'b', '*', B_TYPE_LARGE),

BINDER_TYPE_WEAK_BINDER = B_PACK_CHARS('w', 'b', '*', B_TYPE_LARGE),

BINDER_TYPE_HANDLE = B_PACK_CHARS('s', 'h', '*', B_TYPE_LARGE),

BINDER_TYPE_WEAK_HANDLE = B_PACK_CHARS('w', 'h', '*', B_TYPE_LARGE),

BINDER_TYPE_FD = B_PACK_CHARS('f', 'd', '*', B_TYPE_LARGE),

};

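flags holds the flags of the Binder object. This field matters only the first time a Binder entity is transferred, because at that moment the driver has to create the corresponding entity node in the kernel and takes some parameters from this field.

For the detailed meaning of type and flags, see the article "Android Binder Design and Implementation".

Finally, the binder member is used when the object is a Binder entity and the handle member when it is a Binder reference. cookie is meaningful only for a Binder entity; it carries extra data that the owning process interprets.

With the data structures covered, we return to binder_loop, which first issues the BC_ENTER_LOOPER command: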
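The way an offsets array points the driver at the embedded objects can be sketched in user space. This is an illustration under stated assumptions: demo_obj is a simplified, hypothetical stand-in for struct flat_binder_object, and collect_handles is not a driver function; it only shows the lookup of objects by offset with a bounds check.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

struct demo_obj {        /* simplified stand-in for flat_binder_object */
    uint32_t type;
    uint32_t handle;
};

/*
 * Visit every object named in `offsets` and record its handle.
 * Returns the number of objects visited, or -1 if an offset would
 * place an object outside the data buffer.
 */
int collect_handles(const uint8_t *data, size_t data_size,
                    const size_t *offsets, size_t n_offsets,
                    uint32_t *handles_out)
{
    assert(data != NULL && offsets != NULL && handles_out != NULL);
    for (size_t i = 0; i < n_offsets; i++) {
        struct demo_obj obj;
        if (offsets[i] > data_size ||
            data_size - offsets[i] < sizeof(obj))
            return -1;
        /* memcpy avoids unaligned access into the byte buffer. */
        memcpy(&obj, data + offsets[i], sizeof(obj));
        handles_out[i] = obj.handle;
    }
    return (int)n_offsets;
}
```

Everything in the buffer that no offset points to is treated as opaque payload, which matches the split between ordinary data and Binder objects described above.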

readbuf[0] = BC_ENTER_LOOPER;
binder_write(bs, readbuf, sizeof(unsigned));

This takes us into binder_write:

int binder_write(struct binder_state *bs, void *data, unsigned len)
{
    struct binder_write_read bwr;
    int res;

    bwr.write_size = len;
    bwr.write_consumed = 0;
    bwr.write_buffer = (unsigned) data;
    bwr.read_size = 0;
    bwr.read_consumed = 0;
    bwr.read_buffer = 0;

    res = ioctl(bs->fd, BINDER_WRITE_READ, &bwr);
    if (res < 0) {
        fprintf(stderr,"binder_write: ioctl failed (%s)\n",
                strerror(errno));
    }
    return res;
}

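Note the binder_write_read variable bwr here. write_size is 4 (sizeof(unsigned) on this platform), meaning the write buffer holds 4 bytes; its content is the single command code BC_ENTER_LOOPER, and read_buffer is empty. ioctl is then called again and enters the binder_ioctl function of the Binder driver, where this time we only look at the logic related to BC_ENTER_LOOPER: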

static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)

{

int ret;

struct binder_proc *proc = filp->private_data;

struct binder_thread *thread;

unsigned int size = _IOC_SIZE(cmd);

void __user *ubuf = (void __user *)arg;

/*printk(KERN_INFO "binder_ioctl: %d:%d %x %lx\n", proc->pid, current->pid, cmd, arg);*/

ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

if (ret)

return ret;

mutex_lock(&binder_lock);

thread = binder_get_thread(proc);

if (thread == NULL) {

ret = -ENOMEM;

goto err;

}

switch (cmd) {

case BINDER_WRITE_READ: {

struct binder_write_read bwr;

if (size != sizeof(struct binder_write_read)) {

ret = -EINVAL;

goto err;

}

if (copy_from_user(&bwr, ubuf, sizeof(bwr))) {

ret = -EFAULT;

goto err;

}

if (binder_debug_mask & BINDER_DEBUG_READ_WRITE)

printk(KERN_INFO "binder: %d:%d write %ld at %08lx, read %ld at %08lx\n",

proc->pid, thread->pid, bwr.write_size, bwr.write_buffer, bwr.read_size, bwr.read_buffer);

if (bwr.write_size > 0) {

ret = binder_thread_write(proc, thread, (void __user *)bwr.write_buffer, bwr.write_size, &bwr.write_consumed);

if (ret < 0) {

bwr.read_consumed = 0;

if (copy_to_user(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto err;

}

}

if (bwr.read_size > 0) {

ret = binder_thread_read(proc, thread, (void __user *)bwr.read_buffer, bwr.read_size, &bwr.read_consumed, filp->f_flags & O_NONBLOCK);

if (!list_empty(&proc->todo))

wake_up_interruptible(&proc->wait);

if (ret < 0) {

if (copy_to_user(ubuf, &bwr, sizeof(bwr)))

ret = -EFAULT;

goto err;

}

}

if (binder_debug_mask & BINDER_DEBUG_READ_WRITE)

printk(KERN_INFO "binder: %d:%d wrote %ld of %ld, read return %ld of %ld\n",

proc->pid, thread->pid, bwr.write_consumed, bwr.write_size, bwr.read_consumed, bwr.read_size);

if (copy_to_user(ubuf, &bwr, sizeof(bwr))) {

ret = -EFAULT;

goto err;

}

break;

}

......

default:

ret = -EINVAL;

goto err;

}

ret = 0;

err:

if (thread)

thread->looper &= ~BINDER_LOOPER_STATE_NEED_RETURN;

mutex_unlock(&binder_lock);

wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

if (ret && ret != -ERESTARTSYS)

printk(KERN_INFO "binder: %d:%d ioctl %x %lx returned %d\n", proc->pid, current->pid, cmd, arg, ret);

return ret;

}

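The code at the start of the function needs no further explanation; it is the same as in the earlier binder_become_context_manager call. The only difference is that binder_get_thread now finds the existing binder_thread directly in proc instead of creating a new one.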

First, copy_from_user(&bwr, ubuf, sizeof(bwr)) copies the argument passed in from user space into the local struct binder_write_read variable bwr. Since bwr.write_size is 4, execution enters binder_thread_write. Again we look only at the code relevant to BC_ENTER_LOOPER:

int

binder_thread_write(struct binder_proc *proc, struct binder_thread *thread,

void __user *buffer, int size, signed long *consumed)

{

uint32_t cmd;

void __user *ptr = buffer + *consumed;

void __user *end = buffer + size;

while (ptr < end && thread->return_error == BR_OK) {

if (get_user(cmd, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (_IOC_NR(cmd) < ARRAY_SIZE(binder_stats.bc)) {

binder_stats.bc[_IOC_NR(cmd)]++;

proc->stats.bc[_IOC_NR(cmd)]++;

thread->stats.bc[_IOC_NR(cmd)]++;

}

switch (cmd) {

......

case BC_ENTER_LOOPER:

if (binder_debug_mask & BINDER_DEBUG_THREADS)

printk(KERN_INFO "binder: %d:%d BC_ENTER_LOOPER\n",

proc->pid, thread->pid);

if (thread->looper & BINDER_LOOPER_STATE_REGISTERED) {

thread->looper |= BINDER_LOOPER_STATE_INVALID;

binder_user_error("binder: %d:%d ERROR:"

" BC_ENTER_LOOPER called after "

"BC_REGISTER_LOOPER\n",

proc->pid, thread->pid);

}

thread->looper |= BINDER_LOOPER_STATE_ENTERED;

break;

......

default:

printk(KERN_ERR "binder: %d:%d unknown command %d\n", proc->pid, thread->pid, cmd);

return -EINVAL;

}

*consumed = ptr - buffer;

}

return 0;

}

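Recall that when binder_become_context_manager reached binder_ioctl earlier, the thread created by binder_get_thread was left with thread->looper equal to 0 (the BINDER_LOOPER_STATE_NEED_RETURN bit is cleared at the end of binder_ioctl). After BC_ENTER_LOOPER is handled here, thread->looper therefore becomes BINDER_LOOPER_STATE_ENTERED, indicating that the current thread has entered its loop.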
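The command walk and the flag update can be modelled in a few lines of ordinary C. This is only a sketch under stated assumptions: toy_thread, toy_thread_write and CMD_ENTER_LOOPER are hypothetical names, the command code is not the real BC_ENTER_LOOPER encoding, and the flag values are assumed for illustration; only the control flow follows the listing above.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Assumed flag values; only their distinctness matters for this sketch. */
enum {
    LOOPER_REGISTERED = 0x01,
    LOOPER_ENTERED    = 0x02,
    LOOPER_INVALID    = 0x08
};

/* Hypothetical command code, NOT the real BC_ENTER_LOOPER encoding. */
enum { CMD_ENTER_LOOPER = 1 };

struct toy_thread {
    int looper;   /* bit set of LOOPER_* flags */
};

/* Walk a buffer of 32-bit commands the way binder_thread_write does:
 * fetch one command, advance ptr, act on it, then record progress in
 * *consumed. size_bytes is assumed to be a multiple of 4.
 * Returns 0 on success, -1 on an unknown command. */
int toy_thread_write(struct toy_thread *t, const uint32_t *buf,
                     size_t size_bytes, size_t *consumed)
{
    const uint8_t *start = (const uint8_t *)buf;
    const uint8_t *ptr = start + *consumed;
    const uint8_t *end = start + size_bytes;

    while (ptr < end) {
        uint32_t cmd;

        memcpy(&cmd, ptr, sizeof(cmd));
        ptr += sizeof(cmd);

        switch (cmd) {
        case CMD_ENTER_LOOPER:
            /* Entering after registering is a protocol error. */
            if (t->looper & LOOPER_REGISTERED)
                t->looper |= LOOPER_INVALID;
            t->looper |= LOOPER_ENTERED;
            break;
        default:
            return -1;
        }
        *consumed = (size_t)(ptr - start);
    }
    return 0;
}
```

Starting from looper == 0 and a one-command buffer, the call leaves looper equal to LOOPER_ENTERED and *consumed equal to 4, which matches the write_size of 4 seen in bwr.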

Back in binder_ioctl, bwr.read_size == 0, so binder_thread_read is not called, and binder_ioctl's work for this call is done.

Back in binder_loop, execution enters the for loop:

for (;;) {

bwr.read_size = sizeof(readbuf);

bwr.read_consumed = 0;

bwr.read_buffer = (unsigned) readbuf;

res = ioctl(bs->fd, BINDER_WRITE_READ, &bwr);

if (res < 0) {

LOGE("binder_loop: ioctl failed (%s)\n", strerror(errno));

break;

}

res = binder_parse(bs, 0, readbuf, bwr.read_consumed, func);

if (res == 0) {

LOGE("binder_loop: unexpected reply?!\n");

break;

}

if (res < 0) {

LOGE("binder_loop: io error %d %s\n", res, strerror(errno));

break;

}

}

Another ioctl call is issued. Note the values of the bwr members at this point:

bwr.write_size = 0;

bwr.write_consumed = 0;

bwr.write_buffer = 0;

readbuf[0] = BC_ENTER_LOOPER;

bwr.read_size = sizeof(readbuf);

bwr.read_consumed = 0;

bwr.read_buffer = (unsigned) readbuf;
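To make the difference between the two ioctl calls concrete, the following sketch models only the two size checks in the BINDER_WRITE_READ branch of binder_ioctl. The names toy_bwr, toy_dispatch, toy_enter_looper_call and toy_loop_call are hypothetical, and it assumes readbuf is declared as `unsigned readbuf[32]`, as in the binder_loop source.

```c
#include <stddef.h>
#include <stdint.h>

/* Simplified stand-in for struct binder_write_read; only the two
 * size fields matter for the dispatch decision. */
struct toy_bwr {
    size_t write_size;
    size_t read_size;
};

enum { RAN_WRITE = 1, RAN_READ = 2 };

/* Returns a bit set telling which handlers binder_ioctl would call:
 * binder_thread_write if write_size > 0, binder_thread_read if
 * read_size > 0. */
int toy_dispatch(const struct toy_bwr *bwr)
{
    int ran = 0;

    if (bwr->write_size > 0)
        ran |= RAN_WRITE;
    if (bwr->read_size > 0)
        ran |= RAN_READ;
    return ran;
}

/* First call: one 4-byte command to write, nothing to read. */
struct toy_bwr toy_enter_looper_call(void)
{
    struct toy_bwr bwr = { sizeof(uint32_t), 0 };
    return bwr;
}

/* Loop call: nothing to write, read into the whole of readbuf
 * (assumed to be an array of 32 unsigned values). */
struct toy_bwr toy_loop_call(void)
{
    unsigned readbuf[32];
    struct toy_bwr bwr = { 0, sizeof(readbuf) };
    return bwr;
}
```

Under these assumptions the first call triggers only the write path and the loop call only the read path. The read size is 32 * sizeof(unsigned) bytes, because sizeof(readbuf) counts bytes rather than array elements.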

We then enter binder_ioctl once more, the same function listed above.


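This time bwr.write_size is 0, so binder_thread_write is not called. bwr.read_size is sizeof(readbuf), which is greater than 0 (readbuf holds 32 unsigned values, so the size is 32 * sizeof(unsigned) bytes rather than 32), so execution enters binder_thread_read: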


static int

binder_thread_read(struct binder_proc *proc, struct binder_thread *thread,

void __user *buffer, int size, signed long *consumed, int non_block)

{

void __user *ptr = buffer + *consumed;

void __user *end = buffer + size;

int ret = 0;

int wait_for_proc_work;

if (*consumed == 0) {

if (put_user(BR_NOOP, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

}

retry:

wait_for_proc_work = thread->transaction_stack == NULL && list_empty(&thread->todo);

if (thread->return_error != BR_OK && ptr < end) {

if (thread->return_error2 != BR_OK) {

if (put_user(thread->return_error2, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

if (ptr == end)

goto done;

thread->return_error2 = BR_OK;

}

if (put_user(thread->return_error, (uint32_t __user *)ptr))

return -EFAULT;

ptr += sizeof(uint32_t);

thread->return_error = BR_OK;

goto done;

}

thread->looper |= BINDER_LOOPER_STATE_WAITING;

if (wait_for_proc_work)

proc->ready_threads++;

mutex_unlock(&binder_lock);

if (wait_for_proc_work) {

if (!(thread->looper & (BINDER_LOOPER_STATE_REGISTERED |

BINDER_LOOPER_STATE_ENTERED))) {

binder_user_error("binder: %d:%d ERROR: Thread waiting "

"for process work before calling BC_REGISTER_"

"LOOPER or BC_ENTER_LOOPER (state %x)\n",

proc->pid, thread->pid, thread->looper);

wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);

}

binder_set_nice(proc->default_priority);

if (non_block) {

if (!binder_has_proc_work(proc, thread))

ret = -EAGAIN;

} else

ret = wait_event_interruptible_exclusive(proc->wait, binder_has_proc_work(proc, thread));

} else {

if (non_block) {

if (!binder_has_thread_work(thread))

ret = -EAGAIN;

} else

ret = wait_event_interruptible(thread->wait, binder_has_thread_work(thread));

}

.......

}

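The *consumed argument passed in is 0, so BR_NOOP is written to the buffer that ptr points to, that is, the bwr.read_buffer supplied by user space. At this point thread->transaction_stack == NULL and thread->todo is empty, meaning the current thread has no transactions of its own to handle, so wait_for_proc_work is true and the thread will look for pending work on proc instead. thread->return_error is currently BR_OK, the value set when the binder_thread was created, so execution continues and the thread's state gains BINDER_LOOPER_STATE_WAITING to mark it as waiting. binder_set_nice is then called to set the current thread's priority to proc->default_priority, because the thread is about to handle work belonging to proc and should run at proc's priority.

In this scenario proc has no pending work either, so binder_has_proc_work(proc, thread) is false. If the file was opened in non-blocking mode (non_block is true), ret is set to -EAGAIN and user space is expected to retry the ioctl; otherwise the current thread goes to sleep in wait_event_interruptible_exclusive until a request arrives and wakes it up.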
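The waiting decision described above can be condensed into a small pure function. This is a sketch, not driver code: toy_read_state, toy_outcome and toy_read_wait are hypothetical names, and the real function also handles error codes, priorities and locking that are left out here.

```c
#include <stdbool.h>

/* Inputs to the decision, named after the driver conditions they model. */
struct toy_read_state {
    bool has_transaction_stack; /* thread->transaction_stack != NULL */
    bool thread_todo_empty;     /* list_empty(&thread->todo) */
    bool proc_has_work;         /* binder_has_proc_work(proc, thread) */
    bool thread_has_work;       /* binder_has_thread_work(thread) */
    bool non_block;             /* file opened with O_NONBLOCK */
};

enum toy_outcome {
    TOY_PROCEED,            /* work is available, no waiting needed */
    TOY_RETURN_EAGAIN,      /* non-blocking and nothing to do */
    TOY_SLEEP_PROC_QUEUE,   /* sleep on proc->wait */
    TOY_SLEEP_THREAD_QUEUE  /* sleep on thread->wait */
};

enum toy_outcome toy_read_wait(const struct toy_read_state *s)
{
    /* A thread with no transaction stack and an empty todo list
     * waits for process-wide work rather than its own. */
    bool wait_for_proc_work =
        !s->has_transaction_stack && s->thread_todo_empty;
    bool has_work =
        wait_for_proc_work ? s->proc_has_work : s->thread_has_work;

    if (has_work)
        return TOY_PROCEED;
    if (s->non_block)
        return TOY_RETURN_EAGAIN;
    return wait_for_proc_work ? TOY_SLEEP_PROC_QUEUE
                              : TOY_SLEEP_THREAD_QUEUE;
}
```

For Service Manager's first pass through the loop (no transaction stack, empty todo list, no process work, blocking mode) the model yields TOY_SLEEP_PROC_QUEUE, which corresponds to the wait_event_interruptible_exclusive call on proc->wait.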

This completes our step-by-step walk through the source code showing how Service Manager becomes the daemon of Android's Binder inter-process communication (IPC) mechanism. To summarize, the process is as follows:

1. Open the /dev/binder file: open("/dev/binder", O_RDWR);

2. Set up a 128K memory mapping: mmap(NULL, mapsize, PROT_READ, MAP_PRIVATE, bs->fd, 0);

3. Tell the Binder driver that this process is the daemon: binder_become_context_manager(bs);

4. Enter the loop and wait for requests to arrive: binder_loop(bs, svcmgr_handler);

Along the way, the Binder driver creates one struct binder_proc, one struct binder_thread and one struct binder_node, and with that Service Manager takes on the role of daemon for Android's Binder IPC mechanism.
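As a compact recap of steps 1 and 2, here is a minimal user-space sketch using only standard POSIX calls. The names sketch_state, sketch_open and sketch_close are hypothetical (they loosely mirror binder_state and binder_open but are not the original implementation), and the device path is a parameter so the error path can be exercised on a machine that has no Binder device. Steps 3 and 4 are left out because they need the ioctl definitions from the Binder driver header.

```c
#include <fcntl.h>
#include <stdlib.h>
#include <sys/mman.h>
#include <unistd.h>

/* Hypothetical counterpart of struct binder_state. */
struct sketch_state {
    int fd;          /* descriptor of the opened device file */
    void *mapped;    /* start address of the mapping */
    size_t mapsize;  /* size of the mapping in bytes */
};

/* Step 1: open the device. Step 2: map mapsize bytes read-only.
 * Returns NULL if allocation, open or mmap fails. */
struct sketch_state *sketch_open(const char *path, size_t mapsize)
{
    struct sketch_state *bs = malloc(sizeof(*bs));

    if (bs == NULL)
        return NULL;

    bs->fd = open(path, O_RDWR);
    if (bs->fd < 0)
        goto fail_open;

    bs->mapsize = mapsize;
    bs->mapped = mmap(NULL, mapsize, PROT_READ, MAP_PRIVATE, bs->fd, 0);
    if (bs->mapped == MAP_FAILED)
        goto fail_map;

    return bs;

fail_map:
    close(bs->fd);
fail_open:
    free(bs);
    return NULL;
}

/* Undo sketch_open: unmap, close and free. */
void sketch_close(struct sketch_state *bs)
{
    munmap(bs->mapped, bs->mapsize);
    close(bs->fd);
    free(bs);
}
```

On a device with the Binder driver, a call such as sketch_open("/dev/binder", 128 * 1024) would correspond to the first two steps; on any other machine it simply returns NULL.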

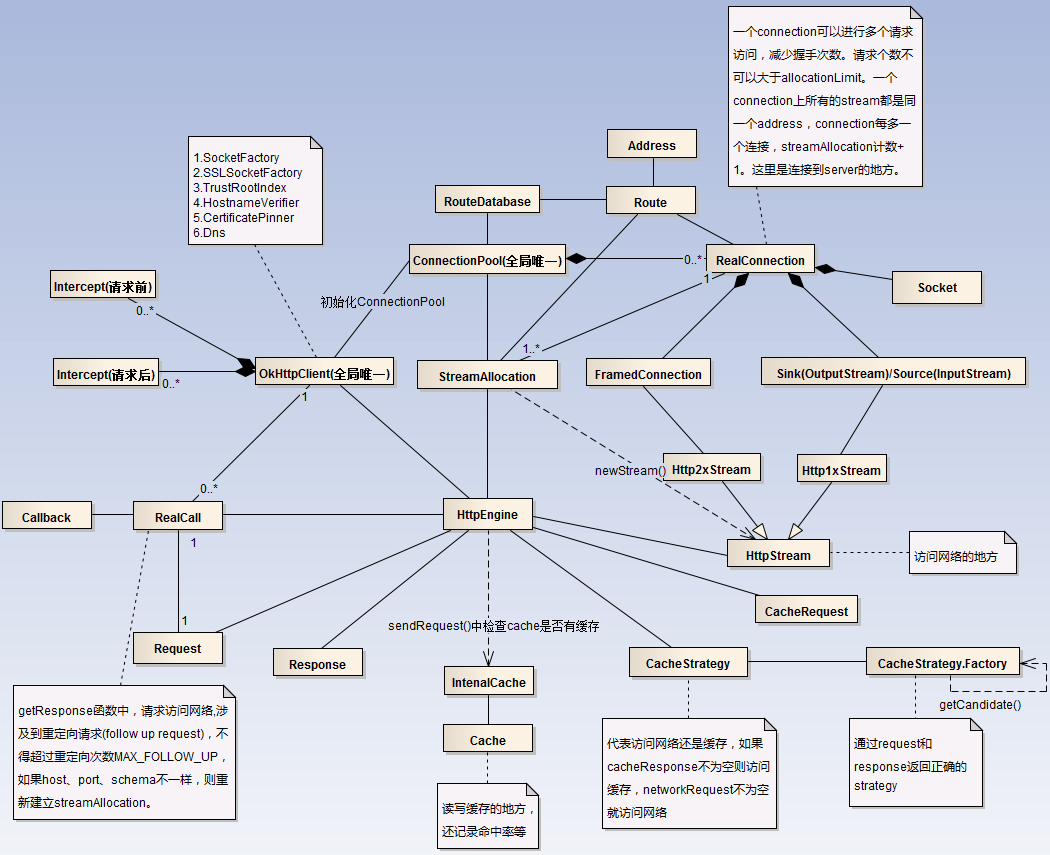
